Table of Contents

- Introduction – why GPT 5.4 vs Gemini matters

- GPT 5.4 and Gemini models – core capabilities explained

- Performance and reasoning benchmarks – how smart are they really?

- Pricing, value and total cost of ownership for GPT 5.4 and Gemini

- Multimodality, context window and user experience

- Deployment, privacy, integration and practical selection in the Australian ecosystem

- Practical tips – how to choose the right model for your use case

- Conclusion – which platform offers the most value right now?

Introduction – why GPT 5.4 vs Gemini matters

If you are building with AI in 2026, the GPT 5.4 vs Gemini question is hard to avoid. Both stacks look powerful on paper, but they behave very differently once real users and real Australian workloads hit them (especially when those workloads are wrapped in a secure Australian AI assistant instead of a generic global chatbot).

For founders, product managers and IT leaders, this is not a purely technical decision. The model you choose shapes your costs, latency, how safe your outputs feel to customers and even which staff you need to hire – particularly if you are planning managed rollouts via specialised AI implementation services rather than ad hoc experiments.

OpenAI’s GPT 5.4 line-up now sits opposite Google’s newest Gemini Pro and Flash tiers. The clash spans reasoning, speed, multimodal support, context windows, pricing and enterprise controls, with trade-offs already visible in public comparisons like ChatGPT‑4.5 vs Gemini analyses that track how theory collides with messy production reality.

The focus here is value: where each platform shines, where it stumbles, and what that means for Australian startups and mid-sized enterprises looking to ship serious AI assistants, copilots and automation. By the end, you should know which stack deserves to be your default, and when a hybrid approach (often orchestrated through custom AI automation and routing) makes more sense.

https://openai.com/index/introducing-gpt-5-4

GPT 5.4 and Gemini models – core capabilities explained

GPT 5.4 is OpenAI’s flagship frontier model for 2026, built for professional knowledge work, deep reasoning, and complex, agentic workflows that use tools and can, when paired with an appropriate agent system, operate a computer via screenshots and simulated mouse and keyboard commands. It builds on GPT 5.2 and 5.3 with sharper logic, stronger coding and lower hallucination rates while keeping the same general API surface, which makes it a natural upgrade path from earlier deployments like those compared in GPT‑5.2 vs Gemini 3 Pro guides.

Google’s Gemini family is split into Pro and Flash tiers, with Pro at the high end and Flash as a cheaper, speed-optimised tier. These models are natively multimodal: they can take in text, images, audio and video in a single prompt and reason across all of them without special hacks, a design reflected in public overviews of the Gemini model family. They plug straight into Google’s Vertex AI platform, which matters a lot if you already live in Workspace or BigQuery.

The most important structural difference is this: GPT 5.4 is tuned around deep reasoning and agentic behaviour, while Gemini is tuned around scale, context and rich media. GPT shines when you want a model to plan, break down a problem and call tools in the right order. Gemini shines when you need it to chew through gigantic piles of text, spreadsheets, images or clips and respond fast – something that becomes even more compelling if your workflows are orchestrated through professional AI consulting and integration services.

Both line-ups have lighter and heavier modes, and both have API and enterprise flavours, but practical comparisons usually pin GPT 5.4 (and its Pro / Thinking style variants) against Gemini 2.5 Pro and Gemini 3 Pro or 3.1 Pro, because those are what most serious products end up using – and what head‑to‑head reviews like the detailed GPT‑5 vs Gemini 2.5 Pro comparison focus on.

Performance and reasoning benchmarks – how smart are they really?

OpenAI-backed evaluations report that GPT 5.4 sets a new state of the art on the GDPval benchmark, which measures performance on 1,320 real-world tasks across 44 knowledge-work occupations. In those internal studies, 5.4 matches or exceeds industry professionals roughly 83 percent of the time, well above GPT 5.2’s roughly 71 percent, and makes substantially fewer errors overall, especially on complex multi-step tool and agent-style workflows (a pattern also discussed in ChatGPT 5.1 vs Gemini 3 summaries). It also scores strongly on demanding expert-level benchmarks like Humanity’s Last Exam, which spans a wide range of specialist subjects.

Gemini 3 Pro and 3.1 Pro answer back with their own trophy cabinet. Google positions them at or near the top of many public leaderboards, including academic reasoning and web development style tests. Third-party coverage notes that Gemini 3 Pro performs extremely well on complex coding, math and multimodal reasoning tasks, sometimes edging out rivals on pure benchmark scores, a picture echoed in roundups comparing Gemini 3 with ChatGPT 5.1 on coding and logic tasks from sources such as AceCloud’s Gemini 3 vs ChatGPT 5.1 analysis.

Benchmarks are useful but narrow. For day-to-day business work, two other patterns matter more: error rates and robustness in long chains. GPT 5.4 introduces better “honesty” around what it does not know and records around one third fewer hallucinations than its direct predecessor, especially when using tools. That tends to show up as fewer weird edge-case failures when an agent is booking flights, touching production data or generating code patches – precisely the sort of reliability you want when you build internal copilots or route requests between lighter and heavier OpenAI models.

Gemini models, by contrast, have slightly higher hallucination risk when they are not grounded in a live data source, but they are very strong once you plug them into Google Search or your own knowledge base through Vertex connectors. They’re also optimised for high-concurrency workloads and can scale efficiently across many simultaneous sessions, which can matter more than a few benchmark points when you have thousands of Australian staff using an internal AI assistant at once.

However, some experts argue that framing Gemini’s performance this way understates the practical impact of its ungrounded hallucination risk. In real deployments, teams don’t always have perfect connectors wired up or complete, well-structured knowledge bases ready to go, and there are plenty of workflows (quick ideation, speculative analysis, offline environments) where you can’t reliably lean on Google Search or Vertex. In those cases, a model that behaves more predictably out of the box can be more valuable than one that’s faster or more scalable on paper. There’s also a concern that over-reliance on live data sources can mask deeper model-level issues: if grounding becomes a crutch, you may end up with a system that looks solid in demos but fails in edge cases where connectors break, APIs rate-limit, or data quality dips. From this angle, organisations might prioritise models with stronger base-level reliability, even if they give up a bit of speed or concurrency headroom.

Pricing, value and total cost of ownership for GPT 5.4 and Gemini

Cost is where the two platforms pull furthest apart. GPT 5.4 sits at the premium end of the market. Public pricing places standard GPT 5.4 input at 2.50 USD per million tokens and output at 15 USD per million tokens, while the higher-end 5.4 Pro / Thinking tiers are available but do not yet have publicly listed per-token prices. OpenAI does discount cached input and batch jobs, but you are still paying a clear “frontier model tax”.
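To make those list prices concrete, here is a minimal Python sketch of the arithmetic. The two rates are the standard GPT 5.4 figures quoted above; the function name and the daily token volumes are illustrative assumptions, and cached-input and batch discounts are ignored.

```python
# Standard GPT 5.4 list prices quoted above, in USD per million tokens.
GPT_54_INPUT_USD_PER_M = 2.50
GPT_54_OUTPUT_USD_PER_M = 15.00


def token_cost_usd(input_tokens: int, output_tokens: int,
                   input_price: float, output_price: float) -> float:
    """Return the USD cost of a workload given per-million-token prices."""
    input_cost = (input_tokens / 1_000_000) * input_price
    output_cost = (output_tokens / 1_000_000) * output_price
    return input_cost + output_cost


# Hypothetical volume: 10M input and 2M output tokens per day.
daily = token_cost_usd(10_000_000, 2_000_000,
                       GPT_54_INPUT_USD_PER_M, GPT_54_OUTPUT_USD_PER_M)
print(f"Daily: {daily:.2f} USD, 30-day month: {daily * 30:.2f} USD")
```

Swapping in another provider's per-million rates gives a like-for-like comparison for the same token volumes, which is usually more telling than comparing headline prices alone.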

Gemini’s Pro tiers, especially 2.5 Pro and the 3.x series, usually land cheaper per million tokens. Earlier 2.5 Pro pricing floated near 1.25 USD per million input tokens, with aggressive cuts on high-volume Flash-style tiers. Google has quite openly chased the “value” position: good-enough performance at much lower marginal cost, especially for input-heavy workloads like summarisation of long legal contracts or call transcripts, a theme that shows up in many “Gemini vs GPT” cost breakdowns, including video explainers such as this YouTube comparison.

For a small startup with a few thousand queries per month, that gap might feel minor. Once you hit millions of calls per day across a contact centre or a national operations team, it becomes brutal. Over a year, using GPT 5.4 everywhere could cost two to five times more than an equivalent Gemini setup, even before infrastructure and data platform charges – unless you are deliberately routing only the most complex requests to GPT tiers, as in guides like GPT 5.2 Instant vs Thinking cost models for SMBs.

The catch is that value is not only unit price. If a more expensive GPT 5.4 agent completes complex, high-ROI tasks in fewer steps and with fewer failures, it might still win on outcome-per-dollar. Many Australian organisations end up in a hybrid pattern: lean on Gemini for high-volume, simple or multimodal tasks and reserve GPT 5.4 for specialised agents in finance, legal, engineering or strategy where one mistake hurts far more than a higher API bill, often formalised in internal playbooks and usage terms.
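The outcome-per-dollar idea can be sketched as expected cost per successful task. This is a simplified model, assuming independent retries until success; the per-attempt costs and success rates below are made-up illustrations, not measured results for either platform, and the model does not price in the damage a failed attempt might cause.

```python
def cost_per_success(cost_per_attempt: float, success_rate: float) -> float:
    """Expected spend to get one successful task, retrying until success.

    With independent attempts, the expected number of attempts is
    1 / success_rate, so the expected cost is cost_per_attempt / success_rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_attempt / success_rate


# Hypothetical figures for one complex task.
premium = cost_per_success(cost_per_attempt=0.05, success_rate=0.95)
budget = cost_per_success(cost_per_attempt=0.02, success_rate=0.35)
print(f"premium: {premium:.4f} USD per success")
print(f"budget:  {budget:.4f} USD per success")
```

Under these assumed numbers the pricier model comes out slightly cheaper per completed task. On easy tasks, where both success rates are high, the cheaper model wins comfortably.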

https://sojenica.rs/openai-s-chatgpt-5-1-versus-google-s-gemini-3-how-34/

Multimodality, context window and user experience

The biggest technical gap between GPT 5.4 and Gemini today is how they handle multimodal inputs and long context. Gemini 2.5 Pro introduced context windows up to one million tokens in some modes, and Gemini 3.x continues that trend. That means you can drop in hundreds of pages of policy, code, emails and screenshots and still have room for your prompt and answer, a capability widely noted in public Gemini documentation.
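A quick way to sanity-check whether a bundle "still has room" is a simple token budget. The sketch below assumes the one-million-token headline figure mentioned above; the function name and the token counts are hypothetical, and real counts would need to come from the provider's own tokenizer.

```python
# Headline window cited above, available "in some modes".
GEMINI_CONTEXT_TOKENS = 1_000_000


def remaining_tokens(document_tokens: list[int], prompt_tokens: int,
                     reserved_output_tokens: int,
                     window: int = GEMINI_CONTEXT_TOKENS) -> int:
    """Tokens left in the window after documents, prompt and reserved answer.

    A negative result means the bundle does not fit and needs trimming
    or chunking.
    """
    used = sum(document_tokens) + prompt_tokens + reserved_output_tokens
    return window - used


# Hypothetical bundle of three large documents.
bundle = [320_000, 410_000, 150_000]
left = remaining_tokens(bundle, prompt_tokens=2_000, reserved_output_tokens=8_000)
print("fits" if left >= 0 else "too large", left)
```

Reserving output tokens up front matters because the answer shares the same window as the inputs.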

OpenAI has pushed its own context limits upward, with GPT 5.4 supporting long prompts well beyond the old 128k ceiling in some enterprise variants, but it still trails Gemini’s headline numbers. More important than the raw token count, though, is how the models behave at those extremes. Both platforms see some quality drop at very long lengths, but Gemini appears more stable when chewing through truly huge bundles of mixed media – exactly the sort of workload that benefits from specialist guidance on model and context-window selection.

In terms of modalities, Gemini remains the more “native” multimedia engine. It accepts text, images, audio and video within the same request, and is comfortable analysing video content, extracting structure from screenshots, or pairing voice instructions with on-screen information. GPT 5.4 supports text plus high-resolution images and documents and can control a computer using vision, but full audio and video I/O are still more limited or roadmap-heavy.

For end-user experience, that translates into clear patterns. If you want a customer service bot that can read a PDF bill, look at a photo of a faulty NBN modem and understand a voice note, Gemini is the obvious fit. If you want an engineering copilot that writes long, coherent plans, refactors codebases and calls internal tools in a disciplined way, GPT 5.4 is usually easier to steer and gives more “human-like” explanations when something goes wrong – particularly when embedded inside tailored AI assistants and automation flows.

https://docsbot.ai/models/compare/gpt-5/gemini-2-5-pro

Deployment, privacy, integration and practical selection in the Australian ecosystem

From a deployment point of view, both OpenAI and Google now feel “enterprise ready”, but they plug into different worlds. GPT 5.4 is accessible through the OpenAI API and also through Azure OpenAI Service, which is deeply woven into Microsoft’s stack: Office, Teams, Dynamics and the rest. For companies already standardised on Azure and Microsoft 365, adding GPT is usually a short hop, especially when combined with a locally hosted Australian AI assistant layer that handles identity, logging and guardrails.

Gemini flows through Google Cloud and Vertex AI. It links natively with Google Workspace, Gmail, Docs, Sheets and BigQuery and can sit inside existing data pipelines with less glue code. For Australian businesses that have standardised on Google Workspace, this difference can knock weeks off initial rollout and cut ongoing integration pain, particularly if they lean on professional deployment and integration support.

Both vendors advertise strong privacy and compliance stances. Enterprise tiers promise that your prompts and data are not used to train the public models, with retention windows in the 30 to 72 hour range mainly for abuse and operations. Each can support Australian data residency needs for enterprise customers — OpenAI via its data residency controls and its sovereign infrastructure partnership with NEXTDC in Sydney, and Google via its owned regions in Sydney and Melbourne — which can assist with Privacy Act and data sovereignty demands and broadly aligns with the expectations covered in many enterprise-focused comparisons of GPT and Gemini for regulated sectors in Australia.

Even so, it is wise to look beyond the glossy marketing pages. You still need proper data processing agreements, internal access controls and logging. For highly regulated sectors in Australia, such as health, finance and government, many teams build a layer on top that shields the raw models behind stricter policies, caching and redaction. From that vantage point, GPT 5.4 and Gemini are tools inside a broader architecture rather than the whole solution – an architecture that can be significantly simplified if you adopt an opinionated pattern like the Lyfe AI services stack.
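As a rough illustration of the redaction part of such a layer, the sketch below masks email addresses before text is sent to any model. The pattern and function name are assumptions for illustration only; a production layer would need far broader rules (names, phone numbers, Medicare or account numbers) plus the policy and caching pieces mentioned above.

```python
import re

# Simple pattern for email-like strings; deliberately narrow.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def redact_emails(text: str) -> str:
    """Replace email addresses with a placeholder before text leaves your boundary."""
    return EMAIL_RE.sub("[EMAIL]", text)


print(redact_emails("Contact jane@example.com.au about the invoice."))
```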

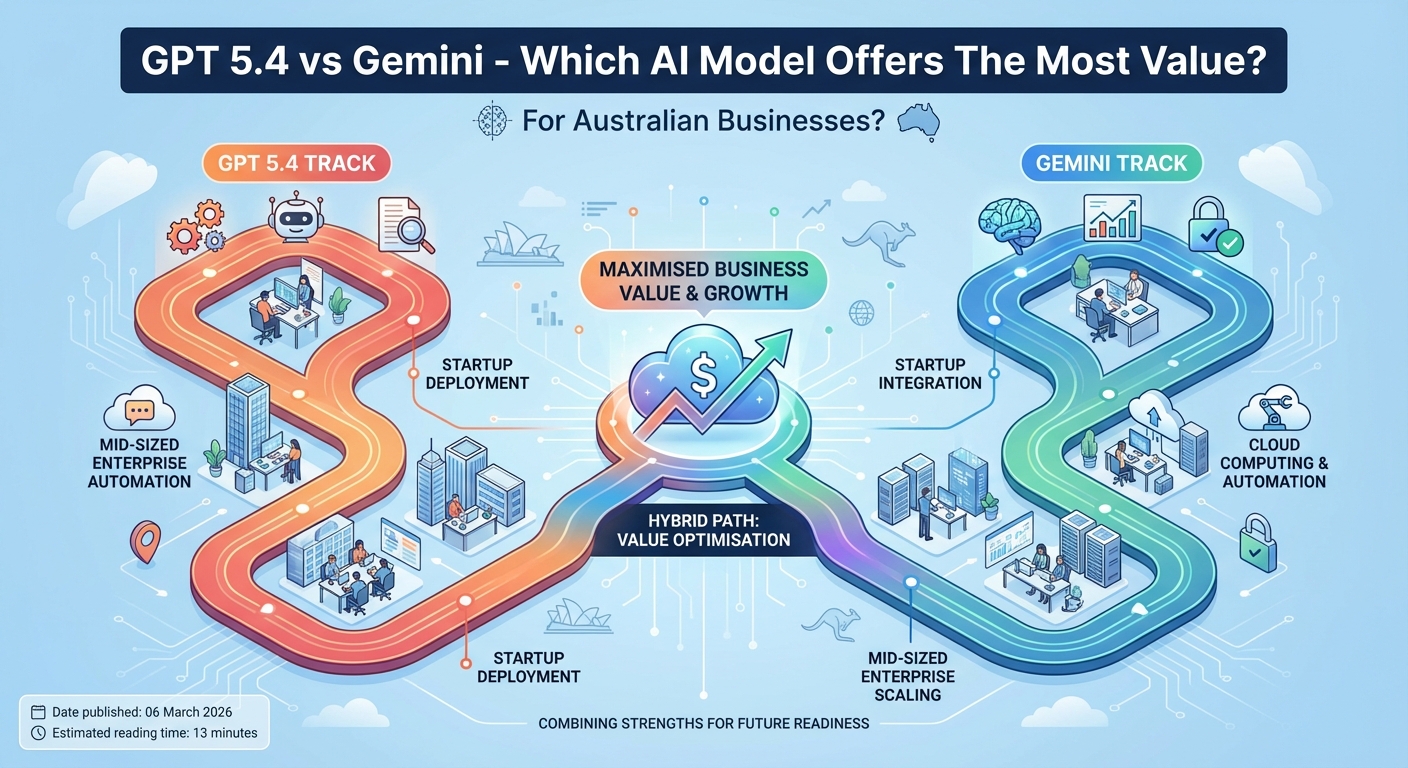

If you are an Australian founder or digital lead sitting in front of a blank architecture diagram, the GPT 5.4 vs Gemini fork can feel oddly personal. A practical path is to categorise workloads into high-stakes reasoning, high-volume routine work and multimodal experiences, then line those buckets up against the strengths already outlined above: GPT 5.4 for accuracy-critical, agentic reasoning; Gemini for large, mixed-media and cost‑sensitive flows.
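That three-bucket split can be captured in a tiny routing table. This is a sketch of the mapping only: the bucket names follow the paragraph above, while the model labels are illustrative strings rather than real API model identifiers, and deciding which bucket a request belongs to is left to your own rules.

```python
from enum import Enum


class Workload(Enum):
    HIGH_STAKES_REASONING = "high_stakes_reasoning"
    HIGH_VOLUME_ROUTINE = "high_volume_routine"
    MULTIMODAL = "multimodal"


# Illustrative labels only, not real API model identifiers.
ROUTES = {
    Workload.HIGH_STAKES_REASONING: "gpt-5.4",
    Workload.HIGH_VOLUME_ROUTINE: "gemini",
    Workload.MULTIMODAL: "gemini",
}


def choose_model(workload: Workload) -> str:
    """Return the model family label for a categorised workload."""
    return ROUTES[workload]


print(choose_model(Workload.HIGH_STAKES_REASONING))
```

Keeping the mapping in one table makes it easy to change a route later without touching the calling code.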

Practical tips – how to choose the right model for your use case

For the first bucket of high-stakes reasoning workloads, GPT 5.4 usually deserves to be your default. Its stronger agent performance, lower hallucination rates and more “honest” failure modes simply lower your risk surface. This is where paying more per token often makes real business sense, especially if your internal routing (as in the GPT 5.2 Instant vs Thinking routing approach) sends only the hardest problems to your most expensive models.

For high-volume routine work and multimodal experiences, Gemini is very hard to beat on cost-performance, especially once you lean into its big context window and mixed-media skills. An internal knowledge assistant for a 2,000-person Australian company, hitting tens of millions of tokens a day, can quickly blow a hole in your budget on GPT 5.4 but stay well within limits on Gemini.

The final tip is simple but often ignored: run your own bake-off. Use the free or cheap tiers of ChatGPT and the Gemini apps to test with your real prompts, your own data and your own latency targets. Log metrics like task success rate, time to correct answer and user satisfaction. Most teams discover that a hybrid stack – Gemini for the “everyday grind”, GPT 5.4 for the gnarly edge cases – gives them the best mix of speed, safety and spend, a pattern echoed in earlier GPT‑5.2 vs Gemini 3 decision guides.
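A bake-off log does not need special tooling. The sketch below, using only the Python standard library, summarises the three metrics named above per model; the `Trial` fields, the model labels and the sample rows are all hypothetical.

```python
from dataclasses import dataclass
from statistics import mean, median
from typing import Optional


@dataclass
class Trial:
    model: str                           # label for the model under test
    success: bool                        # did the task end in a correct answer?
    seconds_to_correct: Optional[float]  # None when no correct answer was reached
    satisfaction: int                    # e.g. a 1-5 user rating


def summarise(trials: list[Trial], model: str) -> dict[str, float]:
    """Aggregate success rate, median time to correct answer and mean rating."""
    rows = [t for t in trials if t.model == model]
    if not rows:
        raise ValueError(f"no trials logged for {model}")
    times = [t.seconds_to_correct for t in rows
             if t.success and t.seconds_to_correct is not None]
    return {
        "success_rate": sum(t.success for t in rows) / len(rows),
        "median_seconds_to_correct": median(times) if times else float("nan"),
        "mean_satisfaction": mean(t.satisfaction for t in rows),
    }


# Hypothetical log entries.
trials = [
    Trial("model-a", True, 12.0, 4),
    Trial("model-a", False, None, 2),
    Trial("model-a", True, 20.0, 5),
    Trial("model-b", True, 8.0, 4),
]
summary_a = summarise(trials, "model-a")
print(summary_a)
```

Running the same prompts through each candidate and comparing the summaries side by side keeps the decision tied to your own workload rather than to public leaderboards.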

https://openai.com/index/gdpval/

Conclusion – which platform offers the most value right now?

When you zoom out, the pattern is fairly clear. Gemini is the value workhorse for large-scale, multimodal and context-heavy assistants, while GPT 5.4 is the precision tool for deep reasoning, complex agents and accuracy-critical tasks. Neither is “better” in every sense; they simply optimise for different trade-offs – a framing that will almost certainly carry forward as future releases like GPT‑6 or Gemini 4 arrive, much as we have seen across earlier GPT vs Gemini comparison cycles.

For most Australian organisations, the smartest move is not to crown a single winner but to design a stack that can use both when it makes sense. Start with Gemini for broad coverage where cost, speed and media flexibility matter most. Layer GPT 5.4 into the slices of your product or workflow where one strong answer is worth far more than a slightly higher bill – ideally through a centralised assistant or platform such as Lyfe AI that can juggle multiple models behind one interface.

The teams that will win this decade are not the ones who picked a side early. They are the ones who treat models as interchangeable parts, constantly measure value in production and swap components as the landscape shifts. If you start building that flexibility now, you will be ready no matter what GPT 6 or Gemini 4 look like in a year’s time, particularly if you keep an eye on evolving model families (from OpenAI announcements to specialised breakdowns like Lyfe AI’s O4‑mini vs O3‑mini comparison).

Frequently Asked Questions

What is the main difference between GPT 5.4 and Gemini for business use?

GPT 5.4 is OpenAI’s latest flagship model focused on strong reasoning, coding and structured workflows, while Gemini focuses heavily on tight integration with Google’s ecosystem (Search, Workspace, YouTube, etc.) and fast multimodal responses. For Australian businesses, GPT 5.4 usually wins on complex reasoning and agent-style use cases, whereas Gemini can be better if you’re deeply invested in Google tools or need fast, lightweight interactions at scale.

Which is better for Australian businesses, GPT 5.4 or Gemini?

For most Australian startups and mid-sized enterprises, GPT 5.4 generally offers better value for mission-critical assistants, internal copilots and complex automations because of its reasoning quality, ecosystem maturity and strong tooling. Gemini can be the better option if your workflows are tightly tied to Google Workspace, you rely heavily on YouTube/Search data, or you need very low-cost, high-volume summarisation and chat. Many teams get best results by using both through a routing layer, which is what LYFE AI specialises in implementing.

Is GPT 5.4 cheaper than Gemini for everyday AI tasks?

Costs depend on the specific tier (frontier vs mini/flash) and how you structure prompts, but there isn’t a universal “cheaper” winner. GPT 5.4 mini-style models often compete well on cost per useful token for chat, coding and knowledge tasks, while Gemini Flash tiers are designed for very low cost, high-speed workloads like summarisation and Q&A. A proper cost comparison should look at total cost per task (including latency, retries and staff time), which LYFE AI can benchmark against your real data and usage patterns.

Which model is better for multimodal AI, GPT 5.4 or Gemini?

Both GPT 5.4 and the latest Gemini models support multimodal input (text, images, and in some tiers audio/video), but they have different strengths. GPT 5.4 tends to shine in reasoning over complex documents and combining text with structured outputs for workflows, while Gemini is strong when working with web-linked content and YouTube-style media. The right choice depends on whether you’re primarily processing business documents and internal systems (GPT 5.4 is often better) or public-facing media and search-linked content (where Gemini can be more natural).

Is GPT 5.4 more accurate than Gemini for complex business questions?

For complex reasoning, multi-step planning and code-related questions, GPT 5.4 usually delivers more consistent and reliable answers in independent benchmarks and early production tests. Gemini can still perform well, especially on general knowledge and search-adjacent questions, but may require more prompt tuning to avoid shallow or overconfident responses on specialised business logic. In practice, many Australian teams use GPT 5.4 as the default for critical decisions and route only simple or low-risk queries to cheaper models.

How do GPT 5.4 and Gemini compare for building internal AI assistants and copilots?

GPT 5.4 is often preferred for internal copilots because of its reasoning, code-writing ability and strong support for tool-calling and workflows. Gemini can still power internal assistants, especially in Google-based environments, but may require more custom glue to integrate with non-Google tools and on-prem systems. LYFE AI typically designs assistants that can call both GPT 5.4 and Gemini behind the scenes, selecting whichever model is best for the particular internal task (e.g. coding vs calendar/email vs document analysis).

Which AI model is better for secure Australian data and compliance, GPT 5.4 or Gemini?

Both OpenAI and Google offer enterprise-grade controls, but neither is automatically “compliant” for your specific Australian regulatory needs out of the box. The key is how the models are hosted, where data is stored, what logging is enabled, and how access is controlled, often via a secure Australian AI layer in front of the models. LYFE AI implements GPT 5.4, Gemini and other models within a compliant, Australian-hosted assistant environment so that data residency, privacy and governance are handled correctly regardless of which model you use.

Can I use GPT 5.4 and Gemini together in the same application?

Yes, you can route different tasks to GPT 5.4 or Gemini based on cost, latency and quality requirements, and this is often the highest-value approach. For example, you might use GPT 5.4 for complex reasoning, document workflows and coding, while sending quick FAQs, summaries or Google-ecosystem tasks to Gemini. LYFE AI builds custom routing and orchestration layers that automatically choose the best model per request so you don’t have to hardwire everything yourself.

How do I decide whether to start with GPT 5.4 or Gemini for my first AI project?

Start by mapping your core use cases: if they involve deep reasoning over business data, coding, or complex workflows, GPT 5.4 is usually the better first choice. If your main need is to layer AI over Google Workspace, Search or YouTube with fast, lightweight responses, Gemini can be a strong starting point. LYFE AI typically runs a short discovery and pilot phase where both GPT 5.4 and Gemini are tested on your real workloads so you can see actual performance, costs and risks before committing.

What does LYFE AI actually do with GPT 5.4 and Gemini for Australian companies?

LYFE AI designs, builds and manages secure AI assistants, copilots and automations that sit on top of models like GPT 5.4 and Gemini. This includes choosing the right model mix, handling data privacy and Australian compliance, integrating with your existing tools (CRMs, ERPs, Google/Microsoft stacks) and monitoring quality and costs over time. Instead of you trying to tune prompts manually in multiple dashboards, LYFE AI turns these models into stable, production-ready systems tailored to your business.