Table of Contents

- Introduction – why GPT-5.4 vs Claude 4.6 matters

- 1. GPT-5.4 vs Claude 4.6 overview and key specs

- 2. Core capabilities, benchmarks, and real-world performance

- 3. Security, compliance, and enterprise readiness for AU

- 4. Cost, token economics, and ROI trade-offs

- 5. AI assistants, tools, and workflow integration

- 6. Practical decision guide for Australian teams

- Conclusion – choosing the right AI mix for your business

Date published: 06 March 2026

Estimated reading time: 13 minutes

GPT-5.4 vs Claude 4.6 – Which AI Model Offers the Most Value for Australian Businesses?

Introduction – why GPT-5.4 vs Claude 4.6 matters

If you are trying to pick between GPT-5.4 and the latest Claude 4.6 models, you are not alone. Every serious AI project in Australia is asking the same thing: which platform actually gives the most value – not just the best demo.

The gap between these systems has closed fast. Both OpenAI and Anthropic now offer frontier models with million-token context windows, strong coding skills, and serious enterprise features. But they are optimised for different things. GPT-5.4 is designed to excel at complex tool use and tightly orchestrated, human-in-the-loop workflows. Claude Opus 4.6 and Sonnet 4.6 lean into deep reasoning, safety, and long-document understanding.

We will look at performance, cost, security, and how each model behaves in real business workflows, from legal review and finance to coding and customer support, then finish with a practical decision framework you can act on this quarter.

According to comparative industry analyses of Claude vs GPT families, these choices increasingly come down to fit, not just raw benchmark scores.

https://openai.com/index/introducing-gpt-5-4

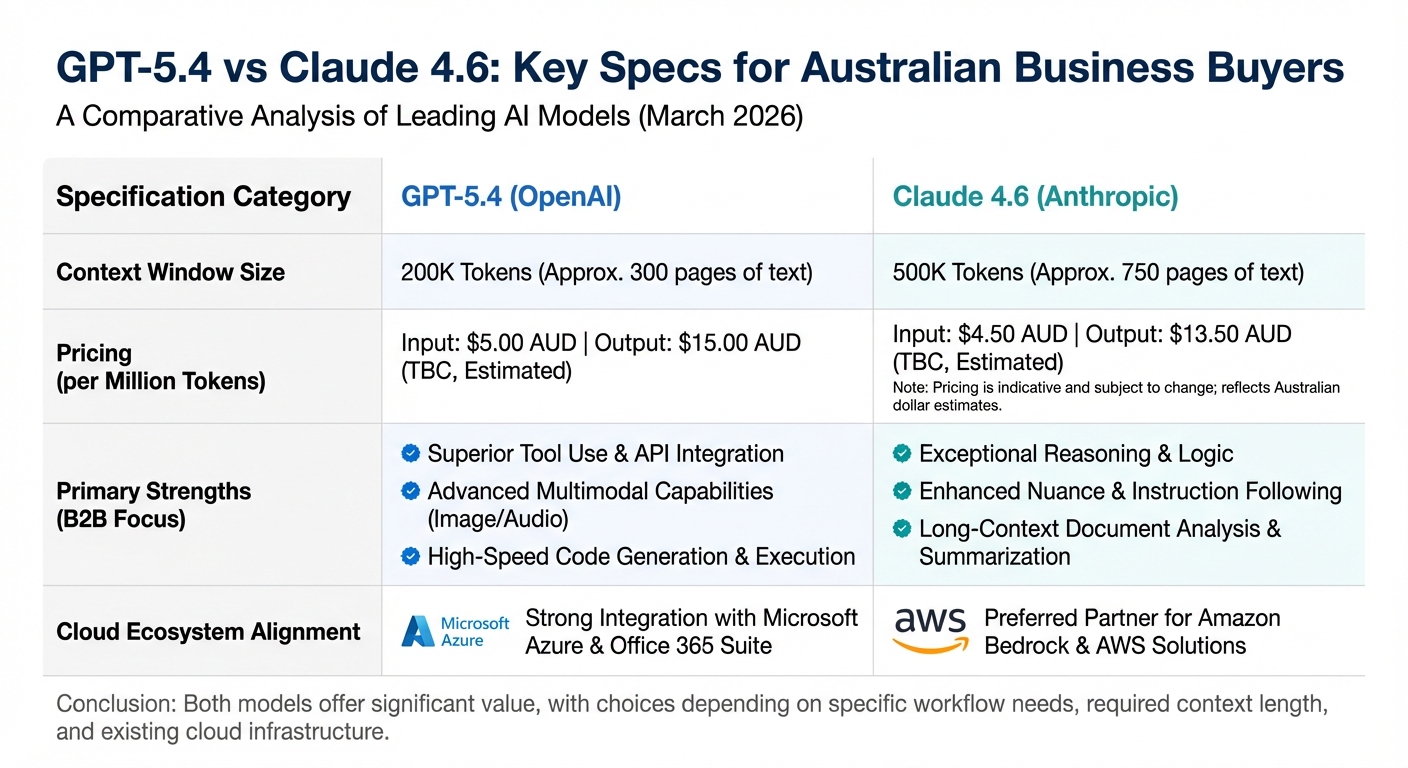

1. GPT-5.4 vs Claude 4.6 overview and key specs

Let us start with what these models actually are. On the OpenAI side, GPT-5.4 is the new frontier family, with variants like GPT-5.4 Pro for maximum power and GPT-5.4 Thinking for agent-style reasoning. On the Anthropic side, the latest releases are Claude Opus 4.6 at the top end and Claude Sonnet 4.6 as the mid-tier workhorse.

Both families support very large context windows. OpenAI’s latest models handle up to around 1 million tokens via the API, while Anthropic’s Claude models typically offer around 200,000 tokens by default, expandable toward the 1 million-token range in certain enterprise and specialised setups. At the top of that range, you can feed in an entire codebase, years of board minutes, or a full banking risk manual in one go, and ask questions across your whole knowledge base instead of chopping it into tiny chunks.
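To get a feel for whether your own material fits, here is a rough sketch. It assumes about four characters per token, a common rule of thumb for English text. Real counts depend on each vendor’s tokenizer, and the window sizes are simply the figures described above, so treat the result as an estimate rather than a guarantee.

```python
# Rough check: will a set of documents fit in one context window?
# ASSUMPTION: ~4 characters per token (rule of thumb for English text).
# Real counts depend on each vendor's tokenizer, so treat results as estimates.

CHARS_PER_TOKEN = 4

# Window sizes as described in this article; confirm against current vendor docs.
CONTEXT_WINDOWS = {
    "claude_default": 200_000,
    "million_token_tier": 1_000_000,
}


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(documents: list[str], window: int, reserve_for_output: int = 8_000) -> bool:
    """True if the estimated prompt plus room for the answer fits in the window."""
    prompt_tokens = sum(estimate_tokens(doc) for doc in documents)
    return prompt_tokens + reserve_for_output <= window


# Example: ~3 million characters of board minutes, about 750K tokens by this heuristic.
minutes = ["x" * 3_000_000]
print(fits_in_context(minutes, CONTEXT_WINDOWS["claude_default"]))      # False
print(fits_in_context(minutes, CONTEXT_WINDOWS["million_token_tier"]))  # True
```

Reserving room for the answer matters: a prompt that fills the whole window leaves the model nothing to respond with.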

Their focus, however, is not the same. GPT-5.4 is framed around agentic workflows, advanced coding, and native computer use. Think “AI that can actually operate your laptop”: it interprets screenshots and issues precise click and form-fill commands that a connected agent executes. Claude 4.6 is framed around analytical reasoning, long-document comprehension, and safe, nuanced content generation, especially for regulated or high-risk areas like finance, healthcare, and law.

Pricing is sharply different at the top tier. GPT-5.4 Pro is listed around $30 per million input tokens and $180 per million output tokens, while Claude Opus 4.6 is closer to $5 per million in and $25 per million out for standard contexts, with higher per-token rates kicking in once you push past very large (200K+) prompt sizes. That gap alone can swing the decision for large workloads, even before you factor in token efficiency.
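To see what that gap means for a single job, here is a small cost calculator using the list prices quoted above. It assumes the figures are USD per million tokens and that prompts stay under the roughly 200K-token mark where Opus’s higher long-context rates kick in. The model names are illustrative labels, not API model IDs.

```python
# Per-request cost at the list prices quoted in this article.
# ASSUMPTIONS: prices are USD per million tokens; prompts stay under ~200K tokens,
# so Opus's higher long-context rate does not apply. Check current pricing pages.

PRICES_PER_MILLION = {
    "gpt-5.4-pro": {"input": 30.0, "output": 180.0},
    "claude-opus-4.6": {"input": 5.0, "output": 25.0},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one request for the given model label."""
    p = PRICES_PER_MILLION[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


# A 50K-token contract in, a 5K-token review out.
for model in PRICES_PER_MILLION:
    print(model, round(request_cost(model, 50_000, 5_000), 3))
# gpt-5.4-pro 2.4
# claude-opus-4.6 0.375
```

In this example the same contract review costs about 6.4 times more on GPT-5.4 Pro, before any difference in how many tokens each model actually uses.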

Finally, there is ecosystem gravity. GPT-5.4 sits inside the Microsoft universe, running smoothly with Azure and Microsoft 365. Claude 4.6 is tightly linked with AWS through Bedrock, which matters if your stack already lives on one cloud or the other. Teams that want a structured, Australian-first view of these specs often pair comparisons like this with local AI strategy and implementation services to turn numbers into an actual roadmap.

https://openai.com/api/pricing

2. Core capabilities, benchmarks, and real-world performance

When people say “advanced AI”, they usually mean a blend of deep reasoning, multimodality, long context, and good safety controls. On a wide range of public benchmarks, leading GPT and Claude models consistently rank among the top-performing systems. The interesting differences show up in how they behave on specific types of work.

For complex reasoning over text – things like picking apart long contracts, cross-checking financial notes, or building strategy briefs – Claude Opus 4.6 tends to edge ahead. Earlier Opus versions have shown strong results on benchmarks like MMLU, GPQA, and GSM8K, which test knowledge, logic, math, and multi-step problem solving, often matching or exceeding the performance of leading GPT-5-class models, though exact percentage gaps vary by test and setup. In practice, this means Claude often gives more careful, structured answers when you throw dense material at it.

Coding is a different story. GPT-5.4 includes the next generation of Codex-style capabilities. In internal 2025 benchmarks, the GPT-5.3 Codex stack (now rolled into 5.4) delivered roughly a 56 percent win rate over Claude Opus 4.6 on “mergeable” production-style coding tasks. It also tends to respond faster and use far fewer tokens on typical dev tasks, which matters when you are running thousands of CI jobs every week.

Many engineers still prefer Claude for reading and debugging large codebases. Claude’s explanations are more thorough and often more “teacher-like”, which helps junior devs understand a tricky refactor. The trade-off is clear: Claude can burn up to 10 times more tokens and take longer, while GPT-5.4 feels more like a rapid-fire pair programmer.

On hallucinations, Claude has a reputation for being more cautious, especially with long and messy business documents. GPT-5.4 has stronger reasoning than its predecessors and offers “plan of action” style step breakdowns, but users still report that Claude is less likely to bluff when it does not know. For industries where a wrong answer is costly, that tone shift matters more than one extra benchmark point.

Independent benchmarks, including community-led GPT vs Claude comparisons and reasoning-depth studies on newer GPT-5 vs Claude Sonnet variants, broadly support this split between raw coding strength and deliberative analysis. For Australian developers, wrapping these strengths into real products typically means plugging models into custom AI automations and model-routing setups rather than betting on a single “winner”.

https://techcrunch.com/2026/03/05/openai-launches-gpt-5-4-with-pro-and-thinking-versions

3. Security, compliance, and enterprise readiness for AU

For Australian organisations, the question is not just “is the AI smart?” but “will this pass risk committee?” The Privacy Act 1988 and the Australian Privacy Principles shape how you can send data offshore, log access, and handle personal information. Neither OpenAI nor Anthropic publishes a neat “fully compliant with AU law” badge, so your legal and security teams still need to do due diligence.

Anthropic has built its brand around safety and alignment, using an approach it calls Constitutional AI. Public material from leading enterprise AI vendors now highlights certifications like SOC 2, HIPAA support via BAAs, and GDPR-aligned practices, plus a clear “no training on enterprise data by default” stance for their API and hosted offerings. That narrative lands well with boards in regulated fields like banking, superannuation, and healthcare, where explainability and caution beat raw speed.

OpenAI, on the other hand, emphasises enterprise controls and governance levers. Its latest GPT models are developed under a broader Preparedness Framework, including cyber-related risk management, and enterprise plans support SSO, role-based access control, audit logs, and optional data residency at a price premium. For teams already deep in Azure, this can slot into existing security tooling with less friction.

Both vendors say they do not use enterprise API data to train their public models. The real nuance is where the data is stored, how long it is kept, and what logging is turned on by default. Australian companies still need to check terms carefully against internal policies, especially for government, critical infrastructure, and health.

In practice, Claude often gets the early nod in risk-sensitive pilots, while GPT-5.4 wins when an organisation wants tight Microsoft 365 integration or heavy agentic automation. Either way, you will want legal, risk, and IT at the same table before pushing real customer data into either stack.

Enterprise buyers increasingly lean on comparisons like Claude vs Gemini vs GPT research for enterprises and practical write-ups such as Claude vs ChatGPT usage breakdowns to brief boards and regulators, often with help from specialist AI risk and implementation partners to align policies, access controls, and deployment patterns with Australian requirements.

https://ttms.com/claude-vs-gemini-vs-gpt-which-ai-model-should-enterprises-choose-and-when

4. Cost, token economics, and ROI trade-offs

Cost is where theory crashes into the finance team’s spreadsheet. On headline pricing alone, GPT-5.4 Pro costs roughly seven times as much as Claude Opus 4.6 for output tokens: around $180 per million output tokens versus roughly $25 per million for Opus. Input pricing shows a similar pattern, with GPT-5.4 Pro at about $30 per million tokens compared to $5 for Opus, a sixfold gap.

Those numbers look brutal until you factor in token efficiency. GPT-5.4 tends to use far fewer tokens for coding, quick assistants, and other dynamic workloads. It is tuned to respond faster at lower “reasoning effort” settings, which can make it cheaper in total for high-throughput, short-interaction use cases, even with a higher per-token rate.

Claude flips that story for heavy analysis. Opus 4.6 is cheaper per token and shines when you want deep dives into fewer, more valuable prompts: a 200-page legal brief, a quarterly risk pack, a complex deal model. Yes, Opus may think out loud and use more tokens than GPT on the same request, but you are starting from a much lower price point, so the net bill can still be lower.
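The “net bill” point can be made precise with a break-even calculation. The sketch below asks how many times more output tokens Opus 4.6 can use than GPT-5.4 Pro on the same prompt before it stops being the cheaper option. It assumes both models read the same number of input tokens, uses the list prices quoted in this section, and ignores long-context surcharges.

```python
# Break-even: how many times more OUTPUT tokens can Opus 4.6 use than GPT-5.4 Pro
# on the same prompt before it stops being cheaper?
# ASSUMPTIONS: same input tokens for both models; list prices as quoted in this
# article (per million tokens, so the unit cancels out); no long-context surcharge.

PRO_IN, PRO_OUT = 30.0, 180.0
OPUS_IN, OPUS_OUT = 5.0, 25.0


def break_even_output_multiplier(input_tokens: int, gpt_output_tokens: int) -> float:
    """Largest k where Opus producing k * gpt_output_tokens still costs <= GPT-5.4 Pro."""
    pro_cost = PRO_IN * input_tokens + PRO_OUT * gpt_output_tokens
    opus_input_cost = OPUS_IN * input_tokens
    return (pro_cost - opus_input_cost) / (OPUS_OUT * gpt_output_tokens)


print(break_even_output_multiplier(0, 5_000))       # 7.2  (output-dominated work)
print(break_even_output_multiplier(50_000, 5_000))  # 17.2 (long document in, short answer out)
```

Under these assumptions, output-heavy work breaks even at about 7.2 times, so a model burning 10 times the output tokens would end up costing more, while a long document in with a short answer out pushes the break-even to about 17 times. With standard GPT-5.4 rates instead of Pro, the numbers would shift accordingly.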

Sonnet 4.6 sits awkwardly in the middle. On paper, it should be the budget choice, but extended thinking modes can quietly multiply its token usage and erase some of the apparent savings. There are already reports of Sonnet runs ending up more expensive than Opus 4.6 once all that extra “internal thought” is billed.
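A quick way to see how this happens: Anthropic bills extended-thinking tokens as output tokens, so a long thinking trace is charged at the higher output rate. In the sketch below the Sonnet prices are hypothetical placeholders (this article does not quote Sonnet 4.6 pricing), the Opus figures are the ones quoted earlier, and Opus is assumed to answer the same request without a long thinking trace.

```python
# How extended thinking can erase Sonnet's per-token discount.
# ASSUMPTIONS: thinking tokens are billed at the output rate; SONNET_* prices are
# HYPOTHETICAL placeholders, not quoted figures; Opus prices as quoted in this
# article; Opus answers without extended thinking.

SONNET_IN, SONNET_OUT = 3.0, 15.0   # hypothetical, USD per million tokens
OPUS_IN, OPUS_OUT = 5.0, 25.0       # as quoted in this article


def request_cost(in_price, out_price, input_tokens, visible_output, thinking_tokens=0):
    """Thinking tokens are billed at the output rate alongside the visible answer."""
    billed_output = visible_output + thinking_tokens
    return (input_tokens * in_price + billed_output * out_price) / 1_000_000


prompt_tokens, answer_tokens = 20_000, 2_000
opus = request_cost(OPUS_IN, OPUS_OUT, prompt_tokens, answer_tokens)
sonnet_light = request_cost(SONNET_IN, SONNET_OUT, prompt_tokens, answer_tokens, thinking_tokens=2_000)
sonnet_heavy = request_cost(SONNET_IN, SONNET_OUT, prompt_tokens, answer_tokens, thinking_tokens=30_000)

print(round(opus, 3), round(sonnet_light, 3), round(sonnet_heavy, 3))  # 0.15 0.12 0.54
```

With these made-up Sonnet rates, a light thinking budget keeps Sonnet cheaper than Opus, Sonnet draws level at about 4,000 thinking tokens on this request, and a 30K-token trace makes the same request cost more than three times as much.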

For Australian companies, latency and local network paths also matter. Customer-facing chatbots in retail or education need fast responses, so lighter models or lower reasoning settings on GPT-5.4 can be better value than maxing out Opus. On the flip side, if your main use is monthly board packs or internal research, an extra second or two does not matter – but the per-token rate does.

Procurement teams often sanity-check these trade-offs against public comparisons such as GPT‑5.4 vs Claude pricing and capability reports and case studies like coding-focused GPT‑5 vs Claude Opus analyses, sometimes with help from partners like Lyfe AI to model token economics, set routing rules, and map them back to local cost centres.

https://www.digitalapplied.com/blog/gpt-5-4-vs-claude-4-6-value-analysis

5. AI assistants, tools, and workflow integration

Most businesses will not use GPT-5.4 or Claude 4.6 raw. You will experience them through assistants, copilots, and background automation. That is where their differences in tools and integration really show.

GPT-5.4 has a standout feature: built-in computer use. It can read screenshots, move the mouse, type into fields, and navigate software much like a human operator. For an Australian accounting firm, that might mean an agent that logs into Xero, exports reports, updates a Google Sheet, and drafts client emails, largely on its own. It is not magic, but it is a real shift from “chatbot” to “digital worker”.
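Under the hood, computer-use agents generally run an observe, decide, act loop. The sketch below shows that shape only; `take_screenshot`, `decide_next_action`, and `execute` are hypothetical stand-ins for a screen capture, a model call, and the automation layer that actually clicks. They are not OpenAI API functions.

```python
# A minimal sketch of the observe-decide-act loop behind "computer use" agents.
# HYPOTHETICAL: the three callables stand in for a screen capture, a model call,
# and the automation layer that performs actions; none are real library APIs.
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Action:
    kind: str         # "click", "type", or "done"
    target: str = ""  # description of the UI element to act on
    text: str = ""    # text to type, if any


def run_agent(
    goal: str,
    take_screenshot: Callable[[], bytes],
    decide_next_action: Callable[[str, bytes, list], Action],
    execute: Callable[[Action], None],
    max_steps: int = 20,
    approve: Optional[Callable[[Action], bool]] = None,
) -> list:
    """Observe, decide, act until the model reports "done" or the step cap is hit."""
    history: list = []
    for _ in range(max_steps):
        screenshot = take_screenshot()                          # observe
        action = decide_next_action(goal, screenshot, history)  # decide (model call)
        if action.kind == "done":
            return history
        if approve is not None and not approve(action):         # optional human check
            raise RuntimeError(f"Action rejected by reviewer: {action}")
        execute(action)                                         # act
        history.append(action)
    raise TimeoutError("Step limit reached before the goal was completed")


# Usage with scripted stubs instead of a real model and screen.
script = iter([Action("click", "Reports menu"), Action("click", "Export CSV"), Action("done")])
steps = run_agent(
    "Export the monthly report",
    take_screenshot=lambda: b"",
    decide_next_action=lambda goal, shot, history: next(script),
    execute=lambda action: print("executing", action.kind, action.target),
)
print(len(steps))  # 2
```

The step cap and the optional `approve` hook are natural places for human-in-the-loop checks, for example asking a staff member to confirm before anything is submitted in Xero.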

Claude is not positioned around native computer control in the same way, although Anthropic has offered a computer-use capability since late 2024. Its strength is tool use inside workflows where a separate integration layer handles the clicks. In practice, Claude works well as the “brain” behind orchestrators on AWS Bedrock, choosing the right API to call, drafting a response, and deciding which step comes next in a process.
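Here is a minimal sketch of that “brain and hands” split: the model only returns which tool to call and with what arguments, and your integration code validates and runs it. The JSON shape, tool names, and stub functions are hypothetical illustrations, not the Anthropic or Bedrock tool-use format.

```python
# "Brain" and "hands": the model picks a tool and arguments, your code runs it.
# HYPOTHETICAL: the JSON shape, tool names, and stubs are illustrative only,
# not the Anthropic or AWS Bedrock tool-use format.
import json


def lookup_order(order_id: str) -> dict:
    """Stub standing in for a real order-system API call."""
    return {"order_id": order_id, "status": "shipped"}


def draft_reply(summary: str) -> dict:
    """Stub standing in for a templated customer reply."""
    return {"reply": f"Hi, quick update: {summary}"}


# Only tools registered here can ever be executed.
TOOLS = {"lookup_order": lookup_order, "draft_reply": draft_reply}


def dispatch(model_decision: str) -> dict:
    """Parse the model's JSON tool choice and run it, rejecting unknown tools."""
    decision = json.loads(model_decision)
    name, args = decision["tool"], decision.get("arguments", {})
    if name not in TOOLS:
        raise ValueError(f"Model requested an unregistered tool: {name}")
    return TOOLS[name](**args)


print(dispatch('{"tool": "lookup_order", "arguments": {"order_id": "A123"}}'))
# {'order_id': 'A123', 'status': 'shipped'}
```

Rejecting unregistered tools in your own code, rather than trusting the model, keeps the set of possible actions under your control.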

On conversation continuity, both models now support million-token contexts at the top tier. That allows long-running project assistants – for example, a planning bot that tracks an entire construction project or a learning companion that remembers months of study history. GPT-5.4 may hold a small edge when you need to mix that memory with lots of tool calls and complex routing.

Personalisation is still early for both. Both platforms give you stronger prompt control, and fine-tuning options exist but vary by model and hosting platform, so check availability for the exact model you plan to use. The bigger question is: which ecosystem fits your stack? If your workplace runs on Microsoft 365, Power Platform, and Azure, GPT-5.4 will feel more “native”. If you are all-in on AWS, CloudWatch, and Bedrock, Claude will slot in more smoothly.

As assistants become more like colleagues than chat boxes, these integration details start to matter as much as the raw intelligence of the model. Many Australian teams now start with a secure, all-in-one AI assistant for everyday business tasks, then extend into deeper automation and model-orchestration projects once early wins are proven.

https://support.claude.com/en/articles/12138966-release-notes

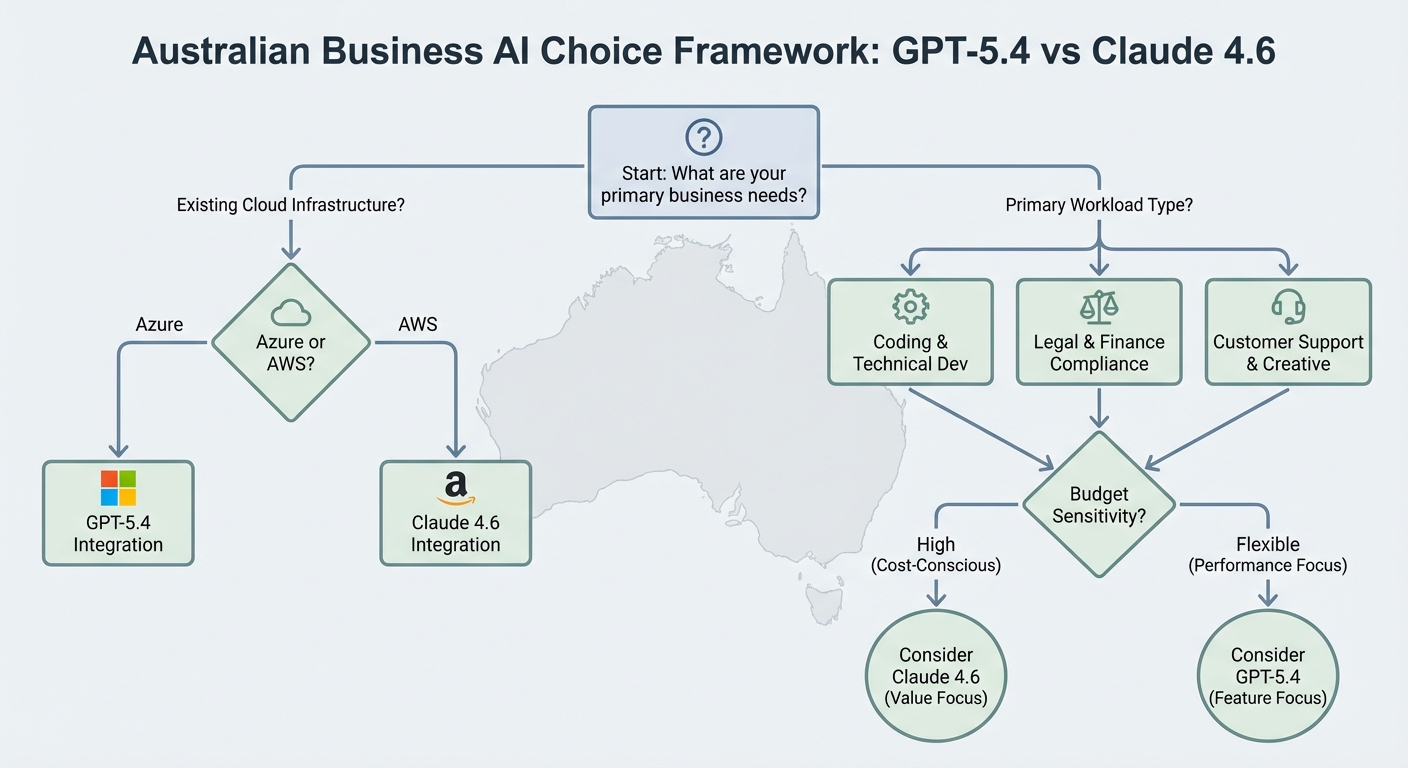

6. Practical decision guide for Australian teams

So which model offers the most value for you right now? It depends on your workloads, your risk appetite, and your existing stack. But we can sketch some clear patterns that match what we see across Australian teams.

GPT-5.4 is usually the better fit if your goals look like this:

- You want autonomous workflows that actually press buttons in existing apps.

- Your dev team is pushing hard on AI-assisted coding, test generation, and CI pipelines.

- You are deep in the Microsoft ecosystem and want clean Azure integration.

- Your workloads are many small, repeat interactions where speed and volume win.

Claude Opus 4.6, with Sonnet 4.6 as the mid-tier option, is usually the better fit if:

- You work in law, finance, government, or health where a wrong answer can be costly.

- Your main use cases are long-document analysis, research, or complex customer queries.

- You want a safety-first posture that is easier to explain to regulators and boards.

- You are already building on AWS and can tap into Bedrock directly.

For many organisations, the smartest path is a hybrid strategy. Route deep analysis, legal work, and “don’t get this wrong” tasks to Claude Opus. Send rapid coding, triage chat, bulk content, and tool-heavy workflows to GPT-5.4. Use routing logic based on prompt type, risk level, and expected token volume.
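A minimal version of that routing logic might look like the sketch below. The task categories, risk levels, 50K-token threshold, and model labels are all illustrative assumptions to tune during your pilot, not recommended settings.

```python
# Route each task to a model by type, risk, and expected token volume.
# ASSUMPTIONS: categories, risk levels, threshold, and model labels are
# illustrative only; model labels are not API model IDs.
from dataclasses import dataclass


@dataclass
class Task:
    kind: str             # e.g. "legal_review", "coding", "triage_chat"
    risk: str             # "low", "medium", or "high"
    expected_tokens: int  # rough prompt + output estimate


DEEP_ANALYSIS = {"legal_review", "risk_analysis", "research", "policy_review"}
FAST_OR_TOOL_HEAVY = {"coding", "triage_chat", "bulk_content", "tool_workflow"}
LARGE_JOB_TOKENS = 50_000  # hypothetical threshold


def route(task: Task) -> str:
    # "Don't get this wrong" work goes to the careful reasoner regardless of type.
    if task.risk == "high" or task.kind in DEEP_ANALYSIS:
        return "claude-opus-4.6"
    # Speed, volume, and tool use go to GPT-5.4.
    if task.kind in FAST_OR_TOOL_HEAVY:
        return "gpt-5.4"
    # Unclassified work: assume big jobs favour the lower per-token rate.
    return "claude-opus-4.6" if task.expected_tokens > LARGE_JOB_TOKENS else "gpt-5.4"


print(route(Task("coding", "low", 3_000)))            # gpt-5.4
print(route(Task("coding", "high", 3_000)))           # claude-opus-4.6
print(route(Task("meeting_notes", "low", 120_000)))   # claude-opus-4.6
```

Checking risk first means a high-stakes coding change still lands on the more cautious model, which mirrors the “don’t get this wrong” rule above.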

Before you commit, run a structured pilot. Pick three to five real workflows – for example, contract review, sales proposal drafting, support triage, and refactoring a key service. Implement both GPT-5.4 and Claude on those flows, measure accuracy, latency, token usage, and user satisfaction, and then decide. A week of careful testing will beat any vendor pitch deck.
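To keep the comparison honest, collect the same measurements for both models on every workflow and summarise them side by side. The record format below is hypothetical, and the sample rows are invented purely to show the mechanics, not results from a real pilot.

```python
# Summarise a side-by-side pilot: accuracy, latency, token usage, satisfaction.
# HYPOTHETICAL record format; sample rows are invented to show the mechanics only.
from collections import defaultdict
from statistics import mean, median


def summarise(results):
    """Group pilot records by (workflow, model) and compute headline metrics.

    Each record is a dict with: model, workflow, correct (bool),
    latency_s (float), tokens (int), rating (1-5 user score).
    """
    grouped = defaultdict(list)
    for record in results:
        grouped[(record["workflow"], record["model"])].append(record)

    summary = {}
    for key, rows in grouped.items():
        summary[key] = {
            "n": len(rows),
            "accuracy": mean(1.0 if r["correct"] else 0.0 for r in rows),
            "median_latency_s": median(r["latency_s"] for r in rows),
            "avg_tokens": mean(r["tokens"] for r in rows),
            "avg_rating": mean(r["rating"] for r in rows),
        }
    return summary


pilot_rows = [  # illustrative only
    {"model": "gpt-5.4", "workflow": "support_triage", "correct": True, "latency_s": 1.2, "tokens": 800, "rating": 4},
    {"model": "gpt-5.4", "workflow": "support_triage", "correct": False, "latency_s": 0.9, "tokens": 650, "rating": 3},
    {"model": "claude-opus-4.6", "workflow": "support_triage", "correct": True, "latency_s": 2.8, "tokens": 2100, "rating": 5},
]

for (workflow, model), stats in summarise(pilot_rows).items():
    print(workflow, model, stats)
```

Using the median for latency keeps one slow outlier from dominating the picture; in a real pilot you would also want enough runs per workflow for the averages to mean something.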

Finally, budget time for change management. Swapping out your base model changes prompts, guardrails, and user habits. Plan training, update internal guidelines, and keep a backup model ready in case one platform has an outage or policy shift. In the end, resilience may be as important as picking a single “best” model.
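Keeping a backup model ready can be as simple as a wrapper that retries the primary and then falls back. In this sketch `call_model` is a hypothetical stand-in for whichever SDK client you use, and the model order is illustrative. In production you would catch that SDK’s specific error types rather than every exception.

```python
# Keep a backup model ready: retry the primary, then fall back.
# HYPOTHETICAL: `call_model` stands in for your SDK client; model labels are
# illustrative. Catch the SDK's specific exceptions in real code.
import time


def complete_with_fallback(prompt, call_model, models=("gpt-5.4", "claude-opus-4.6"),
                           retries_per_model=2, backoff_s=1.0):
    """Try each model in order with exponential backoff; return (model, reply)."""
    errors = []
    for model in models:
        for attempt in range(retries_per_model):
            try:
                return model, call_model(model, prompt)
            except Exception as exc:  # broad only for this sketch
                errors.append(f"{model} attempt {attempt + 1}: {exc}")
                if attempt < retries_per_model - 1:
                    time.sleep(backoff_s * (2 ** attempt))
    raise RuntimeError("All models failed:\n" + "\n".join(errors))


def fake_call(model, prompt):
    """Stub client that simulates an outage on the primary model."""
    if model == "gpt-5.4":
        raise ConnectionError("simulated outage")
    return f"[{model}] summary of: {prompt}"


print(complete_with_fallback("Q3 board pack", fake_call, backoff_s=0))
# ('claude-opus-4.6', '[claude-opus-4.6] summary of: Q3 board pack')
```

Because the wrapper returns which model answered, you can log fallbacks and spot when one platform is having a bad day.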

To keep this grounded, some leaders also draw on real-world usage data, such as studies of how people actually use ChatGPT and Claude at work, then map that against local pilots and structured advisory support.

https://openai.com/index/introducing-gpt-5-4

Conclusion – choosing the right AI mix for your business

GPT-5.4 and Claude 4.6 are not simple rivals fighting for one crown. They are different tools built for different jobs. GPT-5.4 brings agentic power, strong coding, and tight Microsoft integration, at a premium price but with high efficiency for high-throughput work. Claude Opus 4.6 brings careful reasoning, safer tone, and long-context insight at a far lower per-token cost, which suits high-stakes analysis.

For most Australian organisations, the best value will come from combining them, not betting everything on one. Use GPT-5.4 where speed and automation matter most. Use Claude where accuracy, nuance, and explainability really count. Start with a focused pilot, measure real costs and outcomes, then scale what works.

If your team wants help designing that hybrid approach – from workflow mapping to routing logic and governance – now is the time to move. The gap between early adopters and everyone else is widening fast, and the companies that learn to use both platforms well will set the pace for the next few years. Many of those early adopters lean on professional AI consulting services plus clear terms and governance frameworks to keep experimentation controlled but fast.

https://www.digitalapplied.com/blog/gpt-5-4-vs-claude-4-6-value-analysis

© 2026 LYFE AI. All rights reserved.

Frequently Asked Questions

What is the main difference between GPT-5.4 and Claude 4.6 for business use?

GPT-5.4 is optimised for complex tool use, advanced coding and agent-style workflows where the AI can operate apps, process screenshots and follow tightly defined procedures. Claude 4.6 (Opus and Sonnet) focuses more on deep reasoning, safe responses and long‑document understanding, which is ideal for policy, legal and analytical work.

Which is better for Australian businesses, GPT-5.4 or Claude 4.6?

Neither is universally “better”; it depends on your use case and risk profile. GPT-5.4 usually offers more value for highly automated workflows, coding support and integrated tools, while Claude 4.6 often wins for safer long‑form analysis, internal policy work and tasks where you need very careful reasoning and tone control.

How do GPT-5.4 and Claude 4.6 compare on pricing and value for money?

Both vendors now offer tiered pricing based on model size, context window and usage volume, so value depends on how you use them, not just the per‑token rate. For many Australian businesses, GPT-5.4 can be more cost‑effective when you need automation and tool use to replace manual work, while Claude Sonnet 4.6 can be cheaper for high‑volume knowledge work like summarisation, research and policy review, provided extended thinking is kept on a tight budget so it does not inflate token usage.

Which AI model is better for coding and software development, GPT-5.4 or Claude 4.6?

GPT-5.4 generally has the edge for coding, refactoring and multi‑step dev workflows because it is built around agentic behaviour and strong integration with developer tools. Claude 4.6 is still very capable for code explanation, documenting legacy systems and reasoning about large codebases, especially when you paste or connect big chunks of source code.

Is Claude 4.6 safer than GPT-5.4 for regulated industries in Australia?

Claude 4.6 is explicitly positioned around safety, refusal of risky content and careful handling of sensitive topics, which appeals to sectors like finance, legal and healthcare. GPT-5.4 also has strong safety layers, but it is tuned more towards powerful tool use and automation, so your compliance fit will depend on how you configure guardrails, logging and human‑in‑the‑loop checks.

Which model handles long documents better, GPT-5.4 or Claude 4.6?

Both handle very long contexts, with GPT-5.4 and Claude 4.6 each supporting up to around a million tokens in certain configurations. In practice, Claude 4.6 is often preferred for deep reading of contracts, policies and research reports, while GPT-5.4 is ideal when you want to read long documents and then immediately trigger tools, workflows or structured outputs from that analysis.

How do GPT-5.4 and Claude 4.6 integrate with existing business tools and workflows?

GPT-5.4 is tightly integrated into the OpenAI ecosystem and works well with tool calling, internal APIs and “AI agents” that can operate devices or browser sessions. Claude 4.6 integrates via the Anthropic API and partner platforms, and tends to be used inside chat-style knowledge assistants, document review tools and internal search rather than direct device control.

Which AI model should I choose for customer support automation in my Australian company?

If you want the AI to not only answer customers but also perform actions—like updating tickets, checking orders or triggering workflows—GPT-5.4 usually delivers more value because of its tool‑use orientation. If your main need is accurate, safe, well‑toned responses on complex policies or legal terms, Claude 4.6 can be a better fit and easier to keep aligned with your brand voice.

Can GPT-5.4 and Claude 4.6 be used together in the same business?

Yes, many organisations run a dual‑model strategy, using GPT-5.4 for automation, coding and operations, and Claude 4.6 for long‑form reasoning, analysis and sensitive content. This lets you route each task to the model that gives the best mix of accuracy, cost and risk management instead of forcing one model to do everything.

How can LYFE AI help my business choose between GPT-5.4 and Claude 4.6?

LYFE AI works with Australian businesses to audit your workflows, test both models on your real data and design a decision framework that balances cost, performance and risk. They can implement, integrate and manage GPT-5.4, Claude 4.6 or a combination of both, so you end up with a practical, production‑ready AI stack rather than just a proof‑of‑concept.