Multi-Model Fact-Checking for SEO by Lyfe Forge

Table of Contents

- Council of Five: Multi-Model Fact-Checking vs Multimodal AI

- Inside Lyfe Forge’s Council of Five Architecture For SEO

- Accuracy, ROI and Case Studies: What Multi-Model Fact-Checking Delivers

- From Stale To Standout: Multi-Model Fact-Checking For Australian SEO Teams

- Practical Tips: How To Start Using Multi-Model Fact-Checking Today

- Conclusion: Future-Proof Your SEO With a Council of Models

AI has made it cheap to churn out mountains of content. But in SEO, cheap is not the same as effective. When every competitor is using the same single model, you end up with look-alike articles that feel thin, dated, and sometimes just wrong.

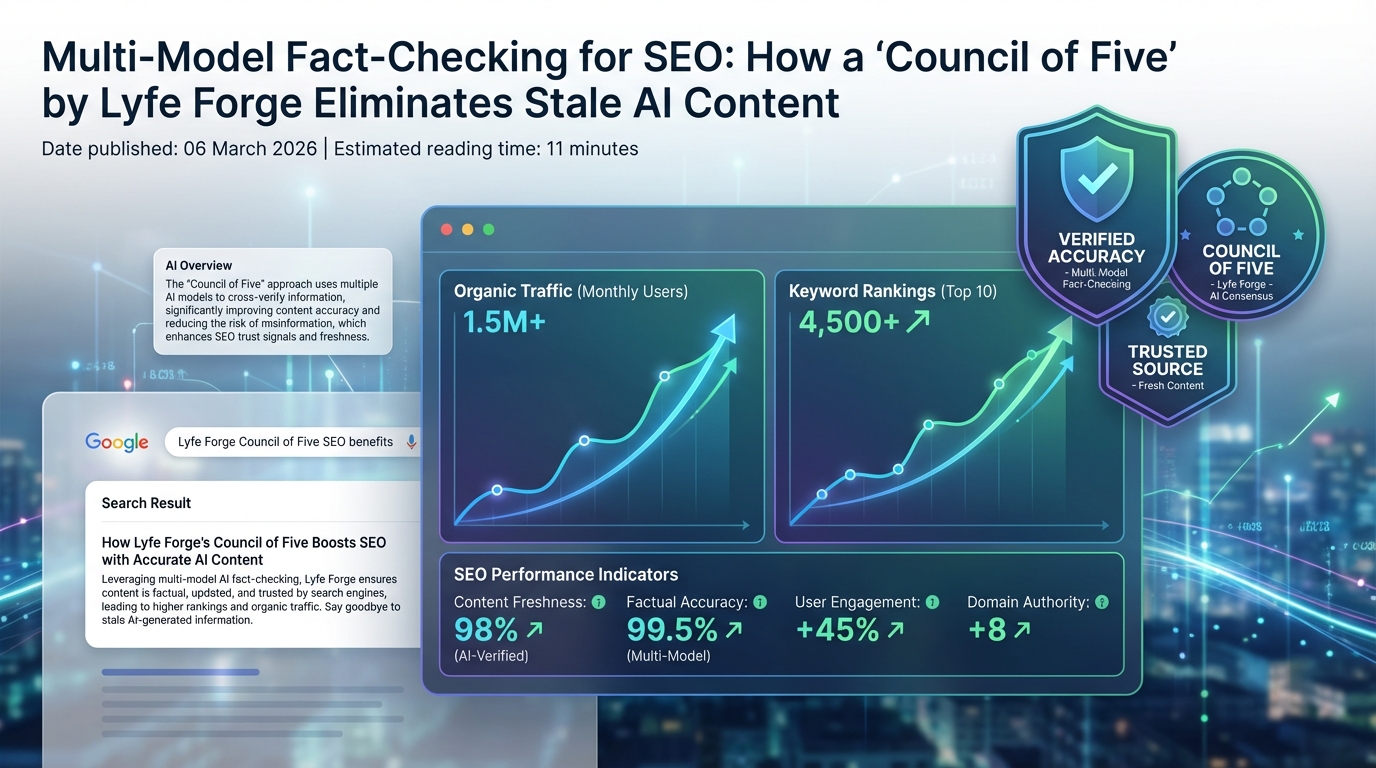

That is exactly where multi-model fact-checking for SEO comes in. Lyfe Forge’s Council of Five uses several independent AI systems to cross-check facts, spot stale data, and surface real sources before you hit publish. The goal is simple: keep your content accurate, current, and clearly trustworthy in a world where Google’s AI Overviews and search quality systems are pushing hard on authority and relevance.

In this guide, we will unpack what a Council of Five actually is, how it works under the hood, and how Australian marketers can use it to scale content without turning their brand into yet another AI clone.

Council of Five: Multi-Model Fact-Checking vs Multimodal AI

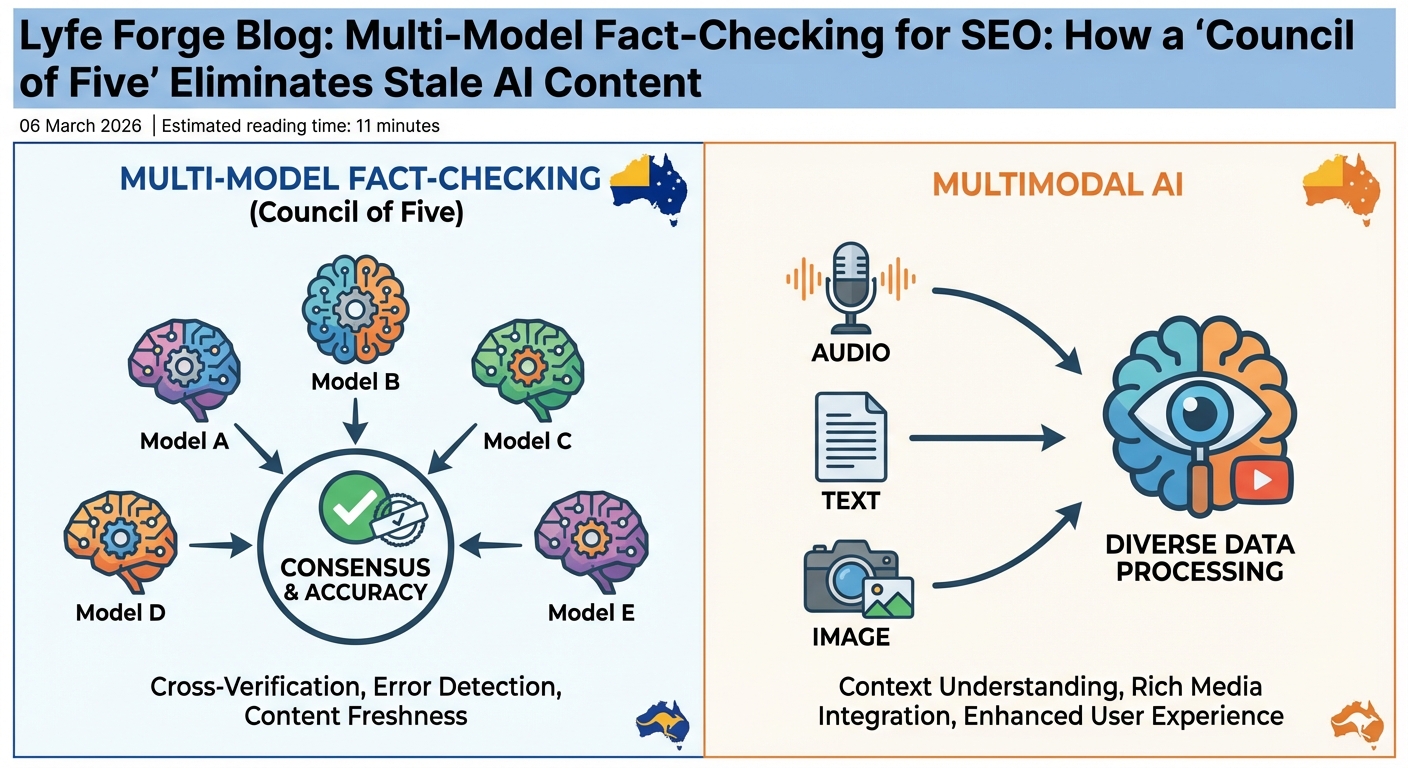

First, clear up the jargon. Multi-model fact-checking and multimodal AI sound almost the same, but they solve different problems for SEO. If you mix them up, you will design the wrong workflow and wonder why rankings stall.

Multi-model fact-checking means you use several different AI models to review the same claim or piece of content in parallel. Think of it as a panel of experts: one model might be great at reasoning, another at live web search, another at sourcing, another at social signals. You send the same question to all of them, compare answers, and then decide what to trust based on consensus and evidence.
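To make the "compare answers" step concrete, here is a minimal sketch that tallies the verdicts several models return for one claim. The model labels and verdict strings are hypothetical placeholders, not output from any real system, and a real workflow would weigh evidence as well as agreement.

```python
from collections import Counter


def summarize_verdicts(verdicts: dict[str, str]) -> dict:
    """Tally per-model verdicts for one claim and report how strongly they agree."""
    counts = Counter(verdicts.values())
    top_verdict, top_count = counts.most_common(1)[0]
    return {
        "majority": top_verdict,
        "agreement": top_count / len(verdicts),
        # Models that did not side with the majority, for a human to inspect.
        "dissenters": sorted(m for m, v in verdicts.items() if v != top_verdict),
    }


# Hypothetical verdicts for a single claim (illustrative only).
verdicts = {
    "reasoning_model": "supported",
    "search_model": "supported",
    "sourcing_model": "supported",
    "social_model": "unclear",
}
summary = summarize_verdicts(verdicts)
print(summary)
```

The dissenters list matters as much as the majority: a lone disagreement is often the cue to look at sources before trusting the consensus.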

Multimodal AI is about the type of data, not the number of models. A multimodal system can read text, look at images, listen to audio, even watch short clips, and then link them together to judge a claim. Recent research on multimodal fact-checking uses pipelines with a text evidence agent, an image analysis agent, and extra agents that build explanations and reasoning paths. These systems can, for example, check if a photo on a social post really matches the headline and description. https://arxiv.org/abs/2508.05097

For SEO teams in 2026, the sequence usually looks like this. Phase one: deploy multi-model fact-checking on your written content, including blog posts, landing pages, product copy, and support articles. This is where most of your organic traffic lives. Phase two: move into multimodal checks for charts, screenshots, and product photos, making sure the story your visuals tell aligns with the words on the page.

In this context, Lyfe Forge’s Council of Five is firmly a multi-model system. It is about who is doing the reasoning, not what type of file you upload. Each model brings its own training data, its own blind spots, and its own strengths to the table. The value comes from how they are orchestrated and how disagreements are resolved before your content goes live.

https://wpaiwriter.com/model-ensemble-draft-fact-check-to-rewrite

Inside Lyfe Forge’s Council of Five Architecture For SEO

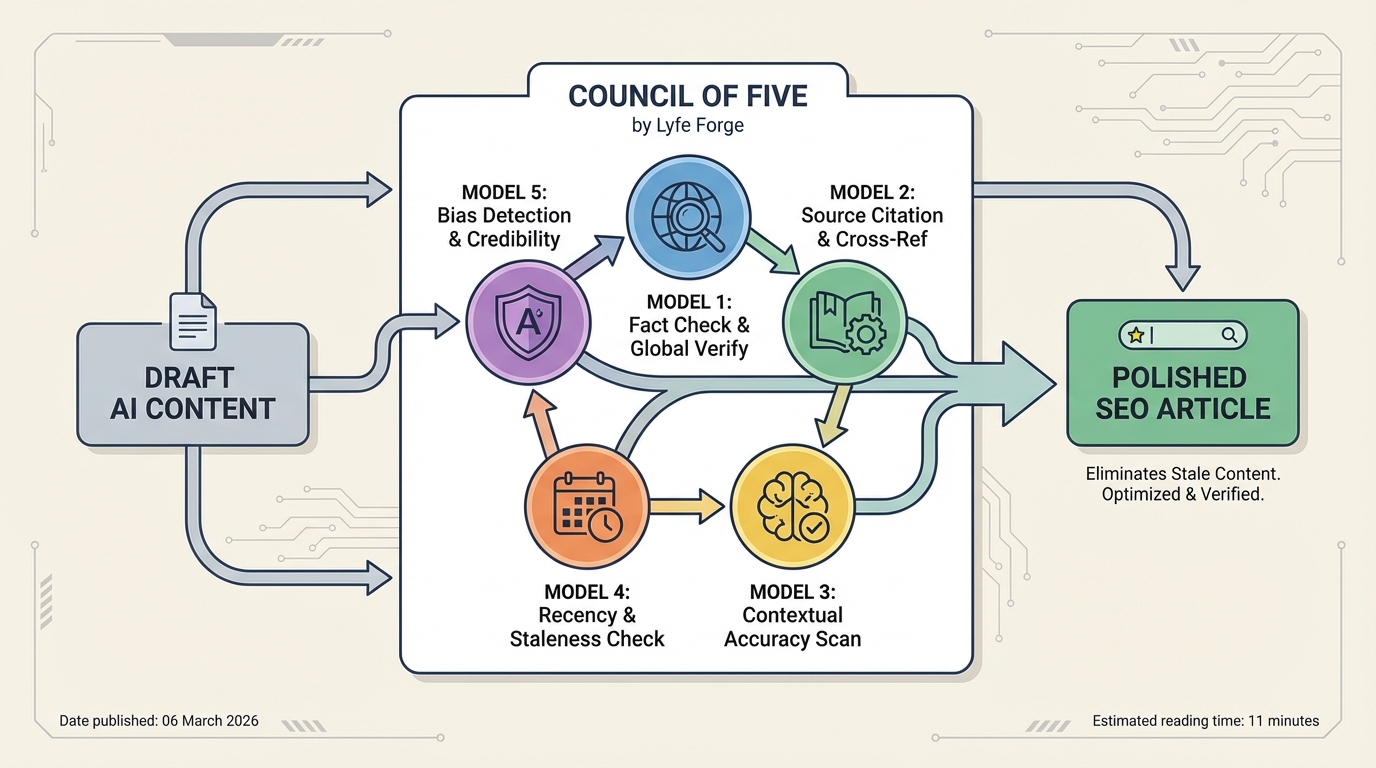

Lyfe Forge, an Australian AI-powered content platform, has baked this council approach into the core of its workflow. The Council of Five is not marketing fluff. It is a specific multi-model fact-checking design aimed at modern SEO needs.

The council currently combines five independent engines: OpenAI for deep reasoning and synthesis, Gemini for real-time Google data and news, Perplexity as a sourced research backbone, Grok for live X/Twitter trends, and Brave Search for an independent web index. Each runs its own retrieval and analysis path instead of sharing one narrow pool of information. This diversity is the point; you want different “brains”, not five copies of the same one. https://lyfeforge.com.au

Under the hood, Lyfe Forge uses parallel orchestration. When you start a new brief inside the Lyfe Forge platform, your topic, target audience, and angle are sent to all five models at once. They independently query the web, their own training data, and relevant sources. The system then builds a unified research brief, including citations, before it even drafts a sentence of copy.
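As a rough illustration of the fan-out described above, the sketch below sends one brief to five engines concurrently using Python's `asyncio`. The `query_model` function is a hypothetical stand-in rather than a real Lyfe Forge or vendor API call, and the engine labels are simply taken from the list earlier in this section.

```python
import asyncio

# Engine labels from the article; the calls themselves are simulated.
MODELS = ["openai", "gemini", "perplexity", "grok", "brave"]


async def query_model(model: str, brief: dict) -> dict:
    """Hypothetical stand-in for one engine's research call."""
    await asyncio.sleep(0)  # placeholder for real network latency
    return {"model": model, "topic": brief["topic"], "findings": [], "citations": []}


async def gather_research(brief: dict) -> list[dict]:
    """Send the same brief to every model at once and collect the results."""
    tasks = [query_model(m, brief) for m in MODELS]
    results = await asyncio.gather(*tasks, return_exceptions=True)
    # Drop engines that failed so one outage does not block the whole brief.
    return [r for r in results if not isinstance(r, BaseException)]


brief = {
    "topic": "multi-model fact-checking",
    "audience": "Australian SEO teams",
    "angle": "accuracy",
}
research = asyncio.run(gather_research(brief))
print([r["model"] for r in research])
```

Running the calls concurrently means total wait time is roughly that of the slowest engine instead of the sum of all five.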

Next, a fact verification engine compares claims in the draft against this multi-model research pool. If three models agree and two disagree, the engine does not just take a simple vote; it checks which models provided stronger evidence and fresher sources. In high-risk or ambiguous areas, the content is flagged for human review inside your workflow rather than quietly published.
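One way to picture "more than a simple vote" is to weight each model's position by how much evidence it cites and how fresh that evidence is. The sketch below is an assumption-laden illustration, not Lyfe Forge's actual algorithm: the `Vote` fields, the 50/50 weighting, the one-year freshness window, and the 0.2 margin are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Vote:
    model: str
    supports_claim: bool
    source_count: int
    newest_source: date


def vote_weight(vote: Vote, today: date, max_age_days: int = 365) -> float:
    """Blend evidence volume and source freshness into a 0..1 weight (assumed formula)."""
    age_days = (today - vote.newest_source).days
    freshness = max(0.0, 1.0 - age_days / max_age_days)
    evidence = min(vote.source_count, 5) / 5
    return 0.5 * evidence + 0.5 * freshness


def resolve(votes: list[Vote], today: date, high_risk: bool = False,
            min_margin: float = 0.2) -> str:
    """Return 'supported', 'contradicted', or 'needs_human_review'."""
    support = sum(vote_weight(v, today) for v in votes if v.supports_claim)
    against = sum(vote_weight(v, today) for v in votes if not v.supports_claim)
    total = support + against
    # High-risk topics and evidence-free claims always go to a person.
    if high_risk or total == 0:
        return "needs_human_review"
    # A narrow weighted margin is treated as ambiguous.
    if abs(support - against) / total < min_margin:
        return "needs_human_review"
    return "supported" if support > against else "contradicted"


today = date(2026, 1, 15)
votes = [  # three models agree on thin, old evidence; two disagree with fresh sources
    Vote("model_a", True, 1, date(2023, 1, 15)),
    Vote("model_b", True, 1, date(2023, 6, 1)),
    Vote("model_c", True, 2, date(2024, 1, 15)),
    Vote("model_d", False, 4, date(2025, 12, 16)),
    Vote("model_e", False, 5, date(2026, 1, 5)),
]
decision = resolve(votes, today)
print(decision)
```

In this made-up example the 3-to-2 majority loses, because the two dissenting models bring more and newer sources. Passing `high_risk=True` routes the same claim to human review regardless of the score.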

This is different from a vanilla “AI writer” that pulls from one model and trusts whatever comes back. Lyfe Forge builds in an argument-style process where each model is effectively presenting its case, and the system (plus your team) acts as the judge. That structure lets you scale research, drafting, and fact-checking without giving up control of your brand voice.

https://www.sciencedirect.com/science/article/pii/S2590123025008291

Accuracy, ROI and Case Studies: What Multi-Model Fact-Checking Delivers

Multi-model fact-checking sounds nice in theory, but SEO teams need numbers. Does a council of models actually cut errors and pay for itself, or is it just another shiny tool on the stack?

Across ensemble-style systems, researchers report double-digit drops in factual mistakes compared with single-model setups. On structured fact-checking benchmarks, ensembles have cut error rates by 10 to 25 percent and pushed accuracy into the low 80 percent range, approaching human fact-checker performance on some tasks. When models cross-check each other, they are less likely to repeat the same hallucination or get stuck in the same bias. https://experiment.com/projects/atjdlplziorzkcuarxdq/protocols/12262-genspark-ai-review-i-ditched-5-tools-for-this-here-s-my-verdict

Lyfe Forge reports that its Council of Five has delivered a 32.3x return on investment for some customers, based on about 35 hours saved each month on research, drafting, and fact verification across 10 SEO articles. Put simply, a single marketer in Melbourne can ship a month of content in a couple of focused sessions instead of burning nights and weekends. The platform’s AI-powered content engine plugs straight into WordPress, Wix, and raw HTML, so the research does not get stuck in docs that no one reads later. https://lyfeforge.com.au

Real-world stories make this clearer. One digital agency in Melbourne scaled from four to sixteen long-form posts per month without hiring additional writers. The council workflow handled fresh stats and cross-checking, while humans focused on strategy, tone, and client sign-off. A Sydney ecommerce SEO manager used the system to audit older guides, uncovering three key outdated metrics that were quietly hurting trust and conversions. After updates, engagement climbed and support tickets about “wrong info” dropped. https://gspark.coupons/genspark-vs-perplexity

There is also an underrated risk angle. In health, finance, or legal niches, a stray incorrect claim is not just embarrassing; it can create real legal exposure. By using a multi-model council plus a human-in-the-loop review, Australian brands can show they took reasonable technical steps to avoid publishing misleading claims, which may matter for regulators and platforms over the next few years.

From Stale To Standout: Multi-Model Fact-Checking For Australian SEO Teams

The Australian search landscape is shifting fast. With Google rolling out AI Overviews and more generative features, simply ranking in the blue links is no longer the whole game. You also want your brand to become a trusted source that other AI systems quote and surface.

That is where freshness and reliability come together. Generative AI made it easy to flood the web with content that looks polished yet hides subtle errors. In response, search systems are placing more weight on authority, clear sourcing, and differentiated insight instead of basic keyword coverage. A multi-model fact-checking layer lets your site adapt to new data without needing a full rewrite every time a stat changes. You can run scheduled audits of key pages, identify stale numbers, and refresh them based on the newest evidence the council finds. https://lyfeforge.com.au

For Australian businesses, this aligns tightly with Google’s E-E-A-T framework: Experience, Expertise, Authoritativeness, and Trustworthiness. A Council of Five setup can be your internal guardrail for E-E-A-T. It nudges writers to include real sources, warns when facts are weak, and helps your experts add lived experience on top of solid data rather than guessing from memory.

There are also broader governance gains. Just as big consulting firms use AI to audit financial data for anomalies, marketing leaders can use multi-model ensembles to audit content portfolios. You can scan for risky claims, broken references, or pages that now conflict with updated regulations or guidance in your industry. It is not only about ranking; it is about protecting your brand while still moving quickly.

Of course, none of this removes the need for human judgment. The sweet spot is a human-plus-AI workflow: Lyfe Forge does the heavy lifting on research and cross-checking, while your team chooses angles, adds stories from the Australian market, and makes the final call on what aligns with your values.

https://www.sciencedirect.com/science/article/pii/S2590123025008291

Practical Tips: How To Start Using Multi-Model Fact-Checking Today

You do not need a full data science team to benefit from multi-model fact-checking. You can start small, then layer in more automation as your content engine grows.

A simple rollout path might look like this:

- Pick your use cases. Start with content that carries the most risk or impact: high-traffic blog posts, core landing pages, comparison pages, and anything in regulated niches.

- Adopt a council-based tool. Instead of juggling several separate AI tabs, use an integrated platform like Lyfe Forge that bakes the Council of Five into one workflow and pushes final content straight to your CMS.

- Define “must-check” claims. Build a short checklist for your team: any statistic, price, regulation, health statement, or quote must be run through the council before sign-off.

- Schedule content audits. Once a quarter, have the system re-scan your top 50 to 100 URLs, flagging stale or conflicting facts so you can update before traffic or trust drops.

- Keep humans in the loop. Assign an editor to review council disputes and edge cases. Use their decisions to refine prompts, policies, and your internal style guide.
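The "must-check" checklist from the list above can be partly automated with a simple pre-screen that flags sentences containing statistics, prices, or regulatory language. This is a minimal sketch under stated assumptions: the patterns and category names are hypothetical and deliberately crude, and health statements or quotes would need richer detection than a regular expression.

```python
import re

# Hypothetical patterns for claim types an editor wants verified before sign-off.
MUST_CHECK_PATTERNS = {
    "statistic": re.compile(r"\b\d+(?:\.\d+)?\s?(?:%|percent\b)"),
    "price": re.compile(r"(?:A\$|AU\$|\$)\s?\d[\d,]*(?:\.\d+)?"),
    "regulation": re.compile(r"\b(?:Act|Regulations?|regulations?|legislation)\b"),
}


def find_must_check(draft: str) -> list[tuple[str, list[str]]]:
    """Return (sentence, categories) pairs for sentences that need verification."""
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    flagged = []
    for sentence in sentences:
        categories = sorted(
            name for name, pattern in MUST_CHECK_PATTERNS.items()
            if pattern.search(sentence)
        )
        if categories:
            flagged.append((sentence, categories))
    return flagged


draft = (
    "Our plan costs $49 per month. "
    "Teams report saving time on research. "
    "Ensembles cut error rates by 25 percent."
)
flagged = find_must_check(draft)
for sentence, categories in flagged:
    print(categories, sentence)
```

Only the flagged sentences then need to go through the council, which keeps the review queue short for editors.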

Over time, this becomes a habit rather than a heavy project. Your writers learn to expect fact feedback from multiple models, your editors spend less time on tedious checks, and your SEO performance reflects that quiet but consistent quality upgrade.
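The quarterly audit step in the rollout path could be scheduled with something as small as the sketch below, which picks the top pages by traffic and returns those not re-checked within the last 90 days. The page records, field names, and URLs are hypothetical; a real setup would pull them from your analytics and CMS.

```python
from datetime import date, timedelta


def pages_due_for_audit(pages: list[dict], today: date,
                        max_age_days: int = 90, limit: int = 100) -> list[str]:
    """Among the top `limit` pages by traffic, return URLs overdue for a re-check."""
    cutoff = today - timedelta(days=max_age_days)
    top_pages = sorted(pages, key=lambda p: p["monthly_visits"], reverse=True)[:limit]
    return [p["url"] for p in top_pages if p["last_checked"] <= cutoff]


# Hypothetical page inventory (illustrative values only).
pages = [
    {"url": "https://example.com/guide-a", "monthly_visits": 5000,
     "last_checked": date(2025, 9, 1)},
    {"url": "https://example.com/guide-b", "monthly_visits": 3000,
     "last_checked": date(2025, 12, 20)},
    {"url": "https://example.com/guide-c", "monthly_visits": 100,
     "last_checked": date(2025, 1, 1)},
]
due = pages_due_for_audit(pages, today=date(2026, 1, 15), limit=2)
print(due)
```

Here `limit=2` stands in for the "top 50 to 100 URLs" mentioned above, so the low-traffic page is skipped even though it is the stalest.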

Conclusion: Future-Proof Your SEO With a Council of Models

In a web full of fast, generic AI content, the brands that win will be the ones that prove they can be trusted. Multi-model fact-checking through a Council of Five gives you a concrete way to do that, day after day, without slowing your team to a crawl.

By combining diverse AI models, structured verification, and human editors, you can publish SEO content that stays fresh, earns citations from other AI systems, and supports your E-E-A-T story in the Australian market. If you are ready to shift from single-model guesswork to council-powered clarity, explore how Lyfe Forge’s AI-powered content platform can plug into your current stack and start cleaning up your content pipeline today.

Frequently Asked Questions

What is multi-model fact-checking in SEO?

Multi-model fact-checking is the process of sending the same claim or piece of content to several different AI models and comparing their answers. Instead of trusting a single model, Lyfe Forge’s Council of Five looks for consensus, supporting sources, and conflicting signals to decide what’s accurate and up to date for SEO content.

How does Lyfe Forge’s ‘Council of Five’ work for fact-checking content?

Lyfe Forge’s Council of Five runs your draft content through five independent AI systems, each with different strengths like web search, reasoning, sourcing, and trend analysis. The platform then cross-checks their responses, flags mismatches, surfaces live sources, and helps your writer or editor correct or enrich the content before publishing.

How does Lyfe Forge stop AI content from becoming stale or outdated?

Lyfe Forge connects multiple models to live, time-stamped data and compares their findings against your draft. When the Council of Five detects outdated stats, policies, pricing, or SERP conditions, it flags those sections and suggests updated sources, helping you keep content aligned with the latest information and Australia’s evolving search landscape.

What’s the difference between multi-model fact-checking and multimodal AI?

Multi-model fact-checking uses several different language models to evaluate the same text-based claim and reach a more reliable consensus. Multimodal AI, by contrast, focuses on handling different kinds of data (text, images, audio, video) together; Lyfe Forge’s Council of Five is specifically about using multiple models, not multiple media types.

Why is multi-model fact-checking important for SEO in 2026?

As Google rolls out more AI Overviews and tightens quality systems, weak or wrong information is more likely to be ignored or demoted. Multi-model fact-checking gives your content stronger evidence, fresher data, and clearer authority signals, which helps it stand out from generic AI articles and stay competitive in 2026 search results.

How does Lyfe Forge help avoid duplicate or look-alike AI content?

Instead of generating a single, generic draft and publishing it, Lyfe Forge uses the Council of Five to critique, enrich, and differentiate your content. It highlights gaps, suggests unique angles, and pulls in diverse sources, so your final article doesn’t just echo what a single model or your competitors already wrote.

Can I use Lyfe Forge’s Council of Five with my existing content workflow?

Yes. You can plug Lyfe Forge into your current process by sending AI or human-written drafts through the Council of Five before they go live, either via web app or API. It works as a fact-checking and enhancement layer that sits between content creation and publication, rather than replacing your existing tools or CMS.

Is Lyfe Forge’s multi-model fact-checking suitable for Australian SEO specifically?

Lyfe Forge is built with Australian SEO in mind, including local search behaviour, regulations, and the 2026 search landscape. The Council of Five can prioritise Australian data, sources, and SERP conditions, making it well suited for local businesses, agencies, and national brands targeting Australian audiences.

How is Lyfe Forge better than just using one advanced AI model like ChatGPT for SEO content?

A single model can hallucinate, miss new updates, or overfit to common phrasing, which leads to generic and sometimes wrong content. Lyfe Forge’s Council of Five exposes disagreements between models, forces each claim to be checked against live evidence, and then guides you toward the most reliable, source-backed version of the content.

Does Lyfe Forge provide sources and citations for the facts it checks?

Yes. When the Council of Five evaluates a claim, it surfaces URLs, documents, and other evidence it relied on so editors can manually verify critical points. This makes it easier to add citations, outbound links, and proof elements that strengthen E-E-A-T and user trust on your site.