Table of Contents

- GPT 5.5 vs Claude Opus 4.7 – Which Frontier Model Actually Fits Your Work?

- Core capabilities and benchmarks – where GPT 5.5 and Claude Opus 4.7 really differ

- Pricing, tokens and real-world cost dynamics

- Enterprise and AU context – governance, data and real use cases

- Choosing between GPT 5.5 and Claude Opus 4.7 – a practical decision framework

GPT 5.5 vs Claude Opus 4.7 – Which Frontier Model Actually Fits Your Work?

OpenAI’s GPT 5.5 and Anthropic’s Claude Opus 4.7 land in the same week, but they are not clones. Think of them as two elite players on different sides of the field. Both are powerful. Neither is perfect for every job.

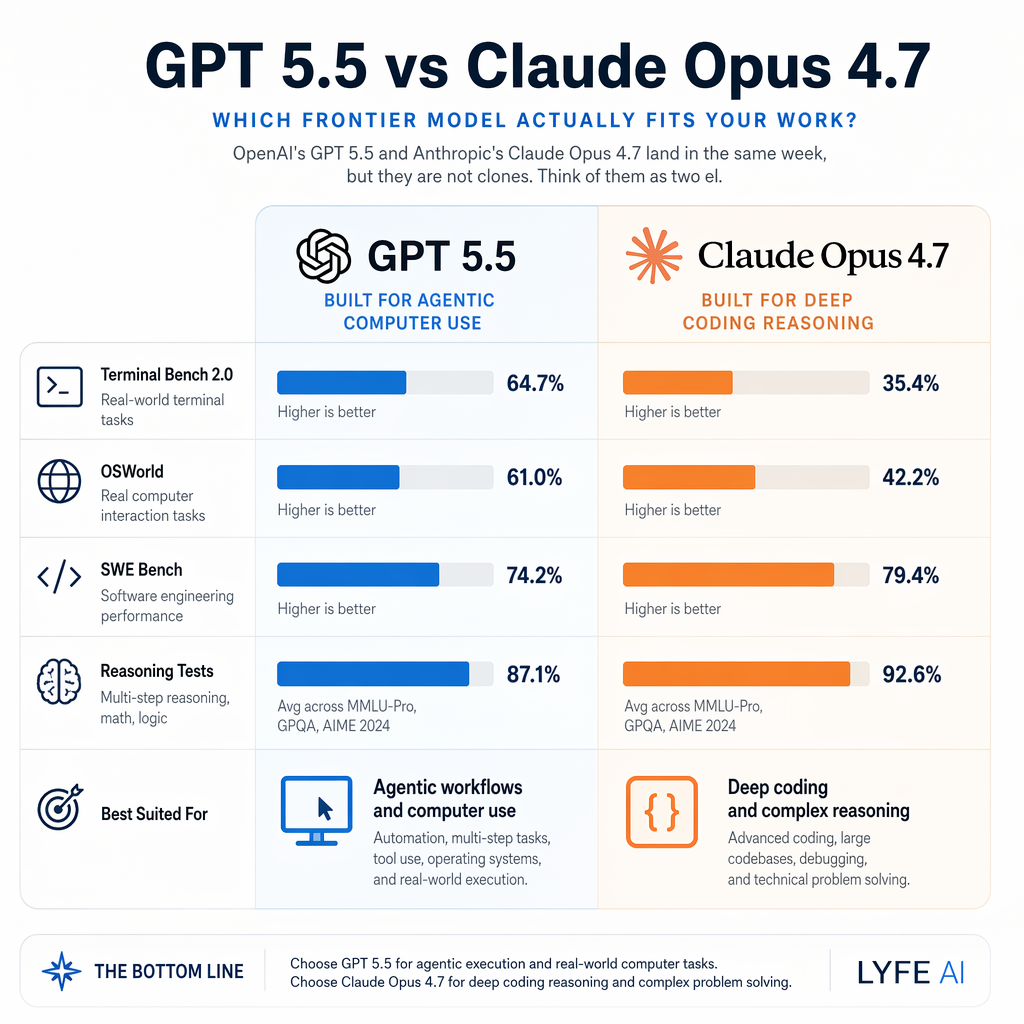

Claude Opus 4.7 leans toward deep coding work, long-horizon reasoning, and complex agent workflows. GPT 5.5 leans toward fast, tool-heavy research, browser-driven tasks, and broad ecosystem support. According to recent benchmark runs, GPT 5.5 pulls ahead on agentic computer tasks like Terminal-Bench 2.0 and OSWorld, while Claude holds its edge on SWE-Bench Pro and tough reasoning tests such as Humanity’s Last Exam.[1][2]

For Australian teams, the question is simple: which model turns into more real output for your budget, stack, and risk profile?

[1] kingy.ai [2] llm-stats.com

Core capabilities and benchmarks – where GPT 5.5 and Claude Opus 4.7 really differ

Both models target “real work”, but they tilt in different directions. GPT 5.5 is pitched by OpenAI as a retrained agentic model built to drive multi-step workflows across tools, with strong performance in coding, research, and computer use. Claude Opus 4.7 is Anthropic’s most capable generally available model, tuned for advanced software engineering, long-running tasks, and careful instruction following, with high performance on vision and memory-heavy jobs. https://openai.com/index/introducing-gpt-5-5/ For teams that want to turn those raw capabilities into production-grade assistants, secure Australian AI assistants can sit on top of these models and handle everyday workflows without exposing sensitive data offshore.

On public benchmarks, the split is clear. GPT 5.5 scores about 82.7 percent on Terminal-Bench 2.0 and 78.7 percent on OSWorld-Verified, which both test how well an AI can operate a computer and command line on its own. Claude Opus 4.7 scores about 69.4 percent on Terminal-Bench 2.0 and 78 percent on OSWorld-Verified,[3] but pulls ahead on SWE-Bench Pro at 64.3 percent versus GPT 5.5’s 58.6 percent,[4] and on Humanity’s Last Exam for tool-free reasoning (46.9 percent versus 43.1 percent).[2][4] This means GPT 5.5 is usually better at execution-heavy agent work, while Opus 4.7 edges ahead on tough coding bugs and pure thinking problems. https://llm-stats.com/gpt-5-5-vs-claude-opus-4-7 In practice, combining those strengths with tailored AI implementation services is what turns benchmark wins into actual business outcomes.

Context windows are no longer a big differentiator. Both models support roughly one million tokens, so each can sit on top of large document sets, repo chunks, or multi-quarter project histories. Instead, how they use that context is the story: Claude tends to track instructions tightly across long prompts, where GPT 5.5 tends to flex more as an autonomous agent that decides what to do next – something that becomes very visible once you hook them into machine learning and predictive models that feed them structured signals.

One quiet but important difference: GPT 5.5 offers a “Thinking” mode that lets you adjust instructions while it is still working through a response. That gives you a kind of live steering wheel on complex reasoning tasks. Claude’s extended or adaptive thinking is powerful, but it does not yet expose that same mid-response control to users, so you need to be more deliberate up front. https://deploymentsafety.openai.com/gpt-5-5 For organisations that prefer opinionated guidance, an experienced partner like Lyfe AI can help decide where this control genuinely improves outcomes versus where it just adds noise.

Pricing, tokens and real-world cost dynamics

If you are picking a model for production work, raw capability is only half the game. Cost per task and failure rate across your typical jobs matter just as much. GPT 5.5’s API is priced at about 5 US dollars per million input tokens and 30 dollars per million output tokens. Claude Opus 4.7 keeps its headline 5 and 25 dollar pricing, but ships with a denser tokenizer that can produce up to 35 percent more tokens from the same text, which quietly raises effective costs. https://finout.io/blog/claude-opus-4-7-pricing

On paper, GPT 5.5 is more expensive per output token. However, OpenAI highlights that GPT 5.5 completes jobs with fewer tokens than GPT 5.4, sometimes up to 40 percent fewer, which can offset the higher unit price. Meanwhile, the extra tokens from Claude’s denser tokenizer can push some workloads over budget if you don’t tune prompts or shorten responses. So the model that looks cheaper on the price sheet can end up costing more on the invoice if you don’t measure properly.
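To make the arithmetic concrete, here is a minimal Python sketch using the list prices quoted above. The job size (20,000 input and 4,000 output tokens) is a hypothetical example, and `token_multiplier` is an illustrative parameter that applies the "up to 35 percent more tokens" figure uniformly to input and output. That uniform scaling is a simplifying assumption, not a measured behaviour.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """List price in USD per one million tokens."""
    input_per_million: float
    output_per_million: float


def job_cost(pricing: Pricing, input_tokens: int, output_tokens: int,
             token_multiplier: float = 1.0) -> float:
    """Return the USD cost of one job.

    token_multiplier scales both token counts, e.g. 1.35 to model a
    tokenizer that emits 35 percent more tokens for the same text
    (assumed to apply equally to input and output).
    """
    scaled_in = input_tokens * token_multiplier
    scaled_out = output_tokens * token_multiplier
    return (scaled_in * pricing.input_per_million
            + scaled_out * pricing.output_per_million) / 1_000_000


# Prices as quoted in the article; the job size below is hypothetical.
GPT_55 = Pricing(input_per_million=5.0, output_per_million=30.0)
OPUS_47 = Pricing(input_per_million=5.0, output_per_million=25.0)

gpt_cost = job_cost(GPT_55, 20_000, 4_000)
opus_nominal = job_cost(OPUS_47, 20_000, 4_000)
opus_inflated = job_cost(OPUS_47, 20_000, 4_000, token_multiplier=1.35)

print(f"GPT 5.5:                 ${gpt_cost:.2f}")
print(f"Opus 4.7 (headline):     ${opus_nominal:.2f}")
print(f"Opus 4.7 (+35% tokens):  ${opus_inflated:.2f}")
```

Under these assumptions the Opus 4.7 job moves from about 0.20 to about 0.27 US dollars, above the 0.22 for GPT 5.5, which is the price-sheet reversal described above. Real token counts will differ per workload, so treat the multiplier as something to measure rather than assume.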

GPT 5.5’s 82.7 percent Terminal-Bench 2.0 score and 78.7 percent on OSWorld suggest it often finishes tool-heavy jobs faster, with fewer retries and less human babysitting. Claude’s lead on SWE-Bench Pro and Humanity’s Last Exam lines up with experiences from devs who use it to close hard GitHub issues or run deep research threads where one wrong assumption ruins the answer. In real terms, GPT 5.5 saves time when you want a digital intern to drive your browser and CLI, while Claude saves time when one clean, correct solution beats five messy drafts. https://www.marktechpost.com/2026/04/23/openai-releases-gpt-5-5-a-fully-retrained-agentic-model-that-scores-82-7-on-terminal-bench-2-0-and-84-9-on-gdpval/

For Australian businesses where every dollar must chase ROI, the practical move is to trace a few representative workflows – maybe a due diligence pack, a sprint’s worth of bug tickets, or a marketing campaign build – through both models and compare full-job cost and latency, not just unit prices. That analysis becomes much easier when you centralise usage through structured AI integration services rather than one-off experiments scattered across teams.
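One way to compare full-job cost rather than unit price is to fold retries and human review into a single figure. The sketch below is an illustration under stated assumptions: attempts are independent, a job is retried until it succeeds, and every attempt needs the same review time. The function name, parameters, and all numbers are hypothetical.

```python
def expected_job_cost(cost_per_attempt_usd: float, success_rate: float,
                      review_minutes: float, hourly_rate_usd: float) -> float:
    """Expected USD cost of getting one job done, including human review.

    Assumes independent attempts retried until success, so the expected
    number of attempts is 1 / success_rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    expected_attempts = 1 / success_rate
    model_spend = cost_per_attempt_usd * expected_attempts
    human_spend = (review_minutes / 60) * hourly_rate_usd * expected_attempts
    return model_spend + human_spend


# Hypothetical figures: a cheaper model that fails half the time versus
# a pricier one that succeeds on 80 percent of attempts.
cheap_but_flaky = expected_job_cost(0.25, success_rate=0.5,
                                    review_minutes=6, hourly_rate_usd=100)
pricier_but_reliable = expected_job_cost(0.30, success_rate=0.8,
                                         review_minutes=6, hourly_rate_usd=100)

print(f"cheap but flaky:      ${cheap_but_flaky:.2f}")
print(f"pricier but reliable: ${pricier_but_reliable:.2f}")
```

With these made-up inputs, human review time dominates the total, so the model with the higher per-attempt price comes out cheaper per finished job. Swapping in your own success rates and review times is the point of the exercise.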

[3] AI Coding Benchmarks 2026: Every Major Eval Explained and Ranked [2] HLE w/o tools Benchmark 2026: 12 model averages | BenchLM.ai [4] llm-stats.com [4] Introducing GPT-5.5 | OpenAI

Enterprise and AU context – governance, data and real use cases

Performance aside, large organisations in Australia have to keep the regulators happy. Both vendors route their models through major clouds, and both are under pressure to align with the Australian Privacy Act and Online Safety Act. But the details differ, and they matter if you handle personal or regulated data.

Anthropic has leaned hard into government and enterprise partnerships. In April 2026, the Australian Government signed a memorandum of understanding with Anthropic focused on AI safety and economic tracking. This signals that Canberra expects Anthropic to play a long-term role in the local AI ecosystem, and it means Claude will keep landing in more “official” conversations about safe deployment and national capability. https://www.industry.gov.au/news/australian-government-has-signed-memorandum-understanding-mou-global-ai-innovator-anthropic

From an infrastructure angle, Claude Opus 4.7 is already available through services like Amazon Bedrock and Google Cloud’s Vertex AI, which both support strict access control, data residency options, and – in Bedrock’s case – a zero-operator-access design. That design stops operators at AWS and Anthropic from viewing your prompts or outputs directly, which is attractive for banks, health providers, and public-sector teams dealing with sensitive flows. GPT 5.5 is rolling out first through ChatGPT and Codex, with API access expanding as OpenAI hardens its own enterprise stack and data residency story. https://aws.amazon.com/blogs/machine-learning/introducing-anthropics-claude-opus-4-7-model-in-amazon-bedrock/ For local teams that want these capabilities wrapped in an Australian-hosted AI assistant, using a provider that understands regional compliance can be a shortcut through the policy maze.

Local regulators are also raising the bar. The OAIC’s guidance on the use of commercial AI tools stresses privacy-by-design, clear consent, and understanding where data is sent and stored. That does not endorse any model by name, but it does push Australian companies to favour vendors who can document their controls and give clear answers on training data, logging, and storage locations. Claude’s security-first marketing and OpenAI’s detailed system cards are both responses to this same pressure. https://www.oaic.gov.au/guidance-and-advice/guidance-on-privacy-and-the-use-of-commercially-available-ai-products It is also why offerings like Lyfe AI’s secure profile management and compliant checkout flows are becoming part of the conversation, not an afterthought.

If your legal team leads with risk memos, Claude via a locked-down cloud environment may comfort them. If your product team leads with speed and wants tight integration with existing GPT-heavy stacks, GPT 5.5 may win the internal politics, provided you configure data controls carefully.

Choosing between GPT 5.5 and Claude Opus 4.7 – a practical decision framework

Start from use case, not hype. For agentic workflows – think “AI ops engineer” driving terminals, clicking through web apps, running scripts – GPT 5.5’s stronger Terminal-Bench and OSWorld scores, along with its thinking mode, make it the natural default. For deep coding, analytical reports, and long-form reasoning where you care more about fidelity than improvisation, Claude Opus 4.7 is still a great bet.

Next, map your constraints. If your workloads live on AWS or Vertex AI, Claude slots in cleanly with existing identity and data-boundary settings. If your stack already leans on ChatGPT for support chat, content, and analytics, GPT 5.5 lets you extend that investment instead of adding a second vendor. For many Australian mid-market companies, the lowest-friction answer is “use both”: GPT 5.5 as your fast agent, Claude as your careful reviewer.

Finally, test on real work. Pick 10 to 20 representative tasks and run them through both models with simple, consistent prompts. Track: token use, full-job cost, error corrections, and human time saved. Most teams find that some categories – say financial modelling or security code review – cluster around one model, while marketing automation or research assistants cluster around the other. This pattern matters more than any global leaderboard. To accelerate that learning curve, structured AI courses and expert-led workshops can arm your staff with the skills to run these experiments safely.
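The tracking step can be as simple as a log of trial runs summarised per task category. The following is a rough sketch, not a prescribed method: the `TrialRun` fields, the neutral labels `model_a` and `model_b`, the sample numbers, and the ranking rule (fewest corrections first, then lowest cost) are all assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class TrialRun:
    model: str            # neutral label for the model under test
    category: str         # e.g. "bug_tickets", "research"
    cost_usd: float       # full-job cost for this run
    corrections: int      # human fixes needed before the output was usable
    minutes_saved: float  # estimated human time saved


def summarise(runs):
    """Average cost, corrections and time saved per (category, model)."""
    grouped = defaultdict(list)
    for run in runs:
        grouped[(run.category, run.model)].append(run)
    summary = {}
    for key, items in grouped.items():
        n = len(items)
        summary[key] = {
            "runs": n,
            "avg_cost_usd": sum(r.cost_usd for r in items) / n,
            "avg_corrections": sum(r.corrections for r in items) / n,
            "avg_minutes_saved": sum(r.minutes_saved for r in items) / n,
        }
    return summary


def preferred_model(summary, category):
    """Pick the model with the fewest corrections, then the lowest cost."""
    candidates = {model: stats for (cat, model), stats in summary.items()
                  if cat == category}
    if not candidates:
        return None
    return min(candidates, key=lambda m: (candidates[m]["avg_corrections"],
                                          candidates[m]["avg_cost_usd"]))


# Made-up sample data, purely to exercise the functions.
runs = [
    TrialRun("model_a", "bug_tickets", 0.40, 0, 25),
    TrialRun("model_a", "bug_tickets", 0.50, 1, 20),
    TrialRun("model_b", "bug_tickets", 0.30, 2, 10),
    TrialRun("model_b", "bug_tickets", 0.30, 1, 15),
    TrialRun("model_a", "research", 0.60, 2, 30),
    TrialRun("model_b", "research", 0.45, 1, 35),
]
summary = summarise(runs)
for category in ("bug_tickets", "research"):
    print(category, "->", preferred_model(summary, category))
```

Ranking on corrections before cost reflects the idea that rework is usually the expensive part. If your team weighs latency or time saved more heavily, change the sort key accordingly.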

If you want a shortcut, use this rule of thumb: choose GPT 5.5 when you need an AI that clicks, fetches, and executes across tools; choose Claude Opus 4.7 when you need an AI that thinks slowly, checks itself, and writes or reasons at depth. Then, as your AI maturity grows, layer them together so each model does the work it is best at – with a coordinating layer of orchestration and predictive modelling tying everything into your existing systems.
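The rule of thumb translates into a very small routing function. This is a hedged sketch only: the `TaskKind` categories are an illustrative taxonomy, and the strings `"gpt-5.5"` and `"claude-opus-4.7"` are placeholder labels, not confirmed API model identifiers. No vendor SDK is called here.

```python
from enum import Enum, auto


class TaskKind(Enum):
    BROWSER_AUTOMATION = auto()
    TERMINAL_SCRIPTING = auto()
    WEB_RESEARCH = auto()
    DEEP_CODING = auto()
    LONG_FORM_REASONING = auto()
    DOCUMENT_ANALYSIS = auto()


# Placeholder labels; replace with whatever identifiers your provider uses.
AGENT_MODEL = "gpt-5.5"
REVIEWER_MODEL = "claude-opus-4.7"

# Tasks where the model mainly clicks, fetches and executes across tools.
EXECUTION_TASKS = {
    TaskKind.BROWSER_AUTOMATION,
    TaskKind.TERMINAL_SCRIPTING,
    TaskKind.WEB_RESEARCH,
}


def route(kind: TaskKind, review: bool = False) -> list[str]:
    """Return the ordered list of models that should handle a task.

    Execution-style tasks go to the agent model; everything else goes to
    the reviewer model. With review=True, agent output is passed to the
    reviewer model as a second step.
    """
    primary = AGENT_MODEL if kind in EXECUTION_TASKS else REVIEWER_MODEL
    pipeline = [primary]
    if review and primary != REVIEWER_MODEL:
        pipeline.append(REVIEWER_MODEL)
    return pipeline


print(route(TaskKind.TERMINAL_SCRIPTING))
print(route(TaskKind.WEB_RESEARCH, review=True))
print(route(TaskKind.DEEP_CODING, review=True))
```

A static mapping like this is the simplest starting point. Once you have trial data for your own workloads, the category-to-model table can be driven by those results instead of a fixed rule.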

Frequently Asked Questions

What is the main difference between GPT 5.5 and Claude Opus 4.7?

GPT 5.5 is optimised as an agentic model for multi-step workflows, tool use, and browser-based research, while Claude Opus 4.7 is tuned for deep coding, long-horizon reasoning, and complex agent workflows. In practice, GPT 5.5 tends to excel at fast, tool-heavy tasks across a broad ecosystem, whereas Claude Opus 4.7 focuses on reliability and depth for complex software engineering and reasoning-heavy problems.

Which is better for coding, GPT 5.5 or Claude Opus 4.7?

Both are strong coders, but in different ways. Benchmark data shows Claude Opus 4.7 leading on hard software engineering tests like SWE-Bench Pro and high-stakes reasoning exams, suggesting it’s better for complex refactors, debugging, and long, multi-file codebases. GPT 5.5 performs very well on coding that’s embedded in larger tool workflows, such as writing scripts, running them via terminal, or iteratively testing in agentic environments.

Is GPT 5.5 or Claude Opus 4.7 better for research and internet browsing tasks?

GPT 5.5 generally has the edge for research and browser-driven tasks. OpenAI built it to use tools and the web efficiently, so it can search, summarise, and cross-check sources across multiple steps. Claude Opus 4.7 can still support research, but it shines more when the task requires deep reasoning over long documents you provide rather than heavy live web interaction.

How do GPT 5.5 and Claude Opus 4.7 compare on benchmarks like Terminal-Bench and SWE-Bench?

Recent public benchmarks show GPT 5.5 pulling ahead on agentic computer-use tasks such as Terminal-Bench 2.0 and OSWorld, where the model must operate tools and systems step by step. Claude Opus 4.7, however, keeps a lead on SWE-Bench Pro and demanding reasoning tests like Humanity’s Last Exam, indicating stronger performance on complex software engineering and reasoning under constraints.

Which model should Australian businesses choose: GPT 5.5 or Claude Opus 4.7?

Australian teams should choose based on workload and risk profile rather than headline scores. If your use cases are heavy on browser automation, quick research, and integrating with a broad ecosystem of tools, GPT 5.5 is usually a better fit. If you need stable, long-running tasks, deep coding help, or careful instruction following on complex workflows, Claude Opus 4.7 will often convert more of your spend into reliable output.

Is Claude Opus 4.7 safer or more reliable than GPT 5.5 for sensitive workflows?

Claude models are known for conservative, careful instruction following and are often preferred where safety, compliance, and reduced hallucinations matter. GPT 5.5 is powerful and flexible, especially with tools, but may require tighter guardrails and monitoring when used in production. For sensitive or high-risk workflows, many teams pilot Claude Opus 4.7 first, then selectively add GPT 5.5 where its tool use gives clear advantages.

Can I use both GPT 5.5 and Claude Opus 4.7 together in the same AI stack?

Yes, many teams now run a dual-model strategy instead of picking just one. You can route tool-heavy, browser or terminal-centric tasks to GPT 5.5, while sending deep reasoning, complex coding, and long-context analysis to Claude Opus 4.7. Platforms like LYFE AI help orchestrate this routing automatically so you maximise strengths of each model without rebuilding your whole stack.

How does LYFE AI help me choose between GPT 5.5 and Claude Opus 4.7?

LYFE AI evaluates your actual workloads—coding, research, support, internal ops—and maps them to where GPT 5.5 or Claude Opus 4.7 performs best. We benchmark models against your real tasks, factor in budget, risk tolerance, and existing tools, then design a routing strategy so each job goes to the model that gives the best output-per-dollar. This avoids overpaying for a single ‘frontier’ model where a targeted mix works better.

Do GPT 5.5 and Claude Opus 4.7 handle long documents and memory the same way?

Claude Opus 4.7 is specifically tuned for long-horizon reasoning and memory-heavy jobs, making it strong for large documents, multi-step plans, and long-running agent workflows. GPT 5.5 can also work with long contexts, but its standout advantage is orchestrating tools over multiple steps rather than just holding very long static context. For document-heavy knowledge work, Claude often has the edge; for interactive tool sessions, GPT 5.5 may win.

How do I test GPT 5.5 vs Claude Opus 4.7 on my own tasks before committing?

The most reliable approach is to run side-by-side trials on a small but representative set of your real jobs—sample tickets, codebases, research tasks, or internal processes. LYFE AI can set up controlled experiments where both models tackle the same prompts, then compare quality, speed, tool performance, and estimated cost so you can make a data-backed choice instead of relying on generic benchmarks.