Table of Contents

- What The OpenAI Privacy Filter Actually Does

- How The Privacy Filter Works Under The Hood

- Typical Ways People Use The OpenAI Privacy Filter

- Strengths, Limits, And How To Compare Alternatives

What The OpenAI Privacy Filter Actually Does

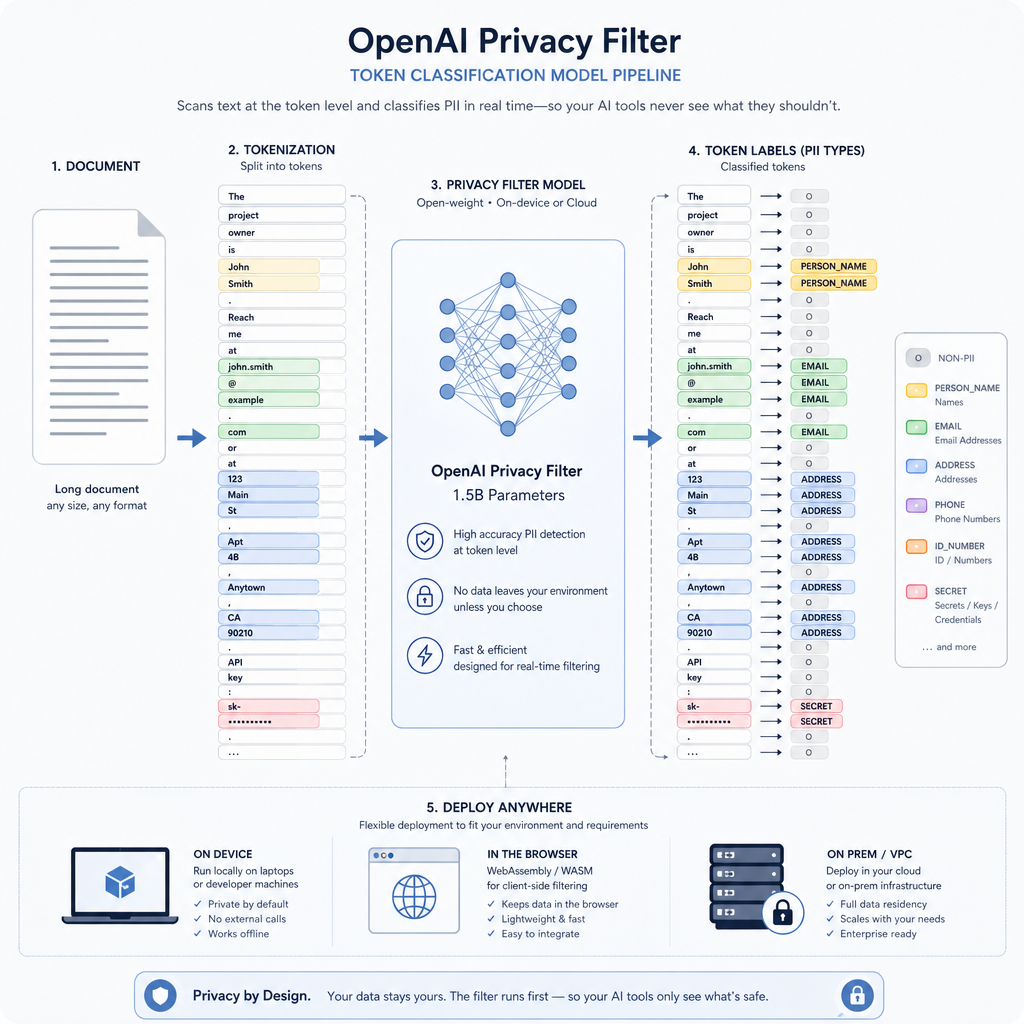

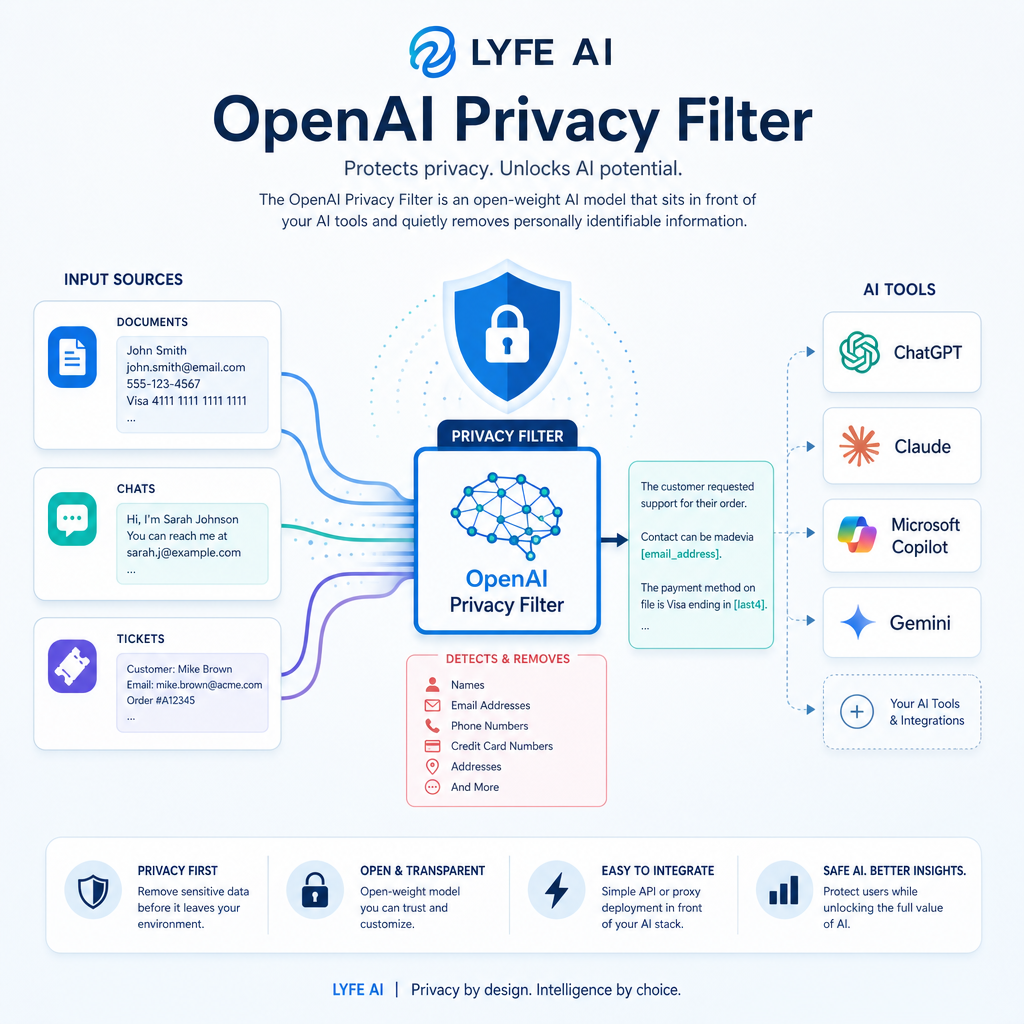

The OpenAI Privacy Filter is an open-weight AI model that sits in front of your AI tools and quietly removes personally identifiable information from text before anything is logged, shared, or sent to another system. Instead of relying on rigid regular expressions, it uses context-aware token classification to decide whether each fragment of text is sensitive or safe to keep. This lets the model handle unstructured inputs such as chat conversations, help desk tickets, or long-form documents without blindly masking every proper noun it sees.

At its core, the Privacy Filter labels tokens into at least eight categories of PII. These cover names, addresses, emails, phone numbers, URLs, dates, account numbers, and secrets like API keys or passwords. Once those spans are detected, a downstream policy can fully mask them, partially redact them, or replace them with pseudonymous identifiers. This means you can still analyse behaviour without exposing identities. Because it is released under the Apache 2.0 licence and can be deployed within an organisation’s own infrastructure, teams can configure privacy‑preserving AI pipelines that are designed so raw sensitive data does not need to leave their environment or be transmitted to an external provider. According to OpenAI’s release notes and the accompanying OpenAI model card, this local, context-aware approach aims to support privacy by design in both experimental and production settings. It also fits naturally alongside secure Australian AI assistants for everyday tasks that must keep sensitive prompts and responses onshore.
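The policy step described above can be sketched in a few lines of Python. This is a minimal illustration, not the Privacy Filter's own API: the `Span` type, the label names, the `POLICY` table, and the `apply_policy` function are all hypothetical, and the spans are assumed to arrive as character offsets from whatever detector you run.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class Span:
    start: int  # inclusive character offset
    end: int    # exclusive character offset
    label: str  # hypothetical label name, e.g. "email"


# Hypothetical policy table: label -> action. Unknown labels fall back to "mask".
POLICY = {"name": "pseudonym", "email": "mask", "account_number": "partial"}


def pseudonym(value: str, label: str, key: bytes) -> str:
    """Keyed hash, so the same value always maps to the same placeholder."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"<{label}_{digest[:8]}>"


def partial(value: str) -> str:
    """Keep the last four characters; fully mask values too short for that."""
    if len(value) <= 4:
        return "*" * len(value)
    return "*" * (len(value) - 4) + value[-4:]


def apply_policy(text: str, spans: list[Span], key: bytes, policy=POLICY) -> str:
    out, cursor = [], 0
    for span in sorted(spans, key=lambda s: s.start):
        if span.start < cursor:
            raise ValueError("overlapping spans are not handled in this sketch")
        out.append(text[cursor:span.start])
        value = text[span.start:span.end]
        action = policy.get(span.label, "mask")
        if action == "partial":
            out.append(partial(value))
        elif action == "pseudonym":
            out.append(pseudonym(value, span.label, key))
        else:
            out.append(f"[{span.label.upper()}]")
        cursor = span.end
    out.append(text[cursor:])
    return "".join(out)
```

Using a keyed hash for pseudonyms means the same person maps to the same placeholder across documents, which keeps behavioural analysis possible, while the key itself stays inside your environment.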

How The Privacy Filter Works Under The Hood

Underneath the simple interface, the OpenAI Privacy Filter is a compact bidirectional token-classification model with 1.5 billion parameters.[1-4] During inference, the full set of parameters participates in computation rather than a small fixed subset of 50 million. The architecture is designed to interoperate with modern foundation models that support context windows of up to 128,000 tokens,[1-3] so a full contract, source file, or multi-thread email chain can be scanned in one pass instead of being chopped into smaller chunks. Because the model runs efficiently on laptops, in browsers, and on on-prem servers, organisations do not need to send unredacted content to the cloud just to benefit from modern PII detection. This matters most when they are already running specialised AI services within tightly controlled environments.

Technically, the model was adapted from an autoregressive checkpoint and retrained to perform token-level classification over eight distinct PII labels plus a non-private class. During inference, it applies constrained Viterbi decoding over the predicted labels to join neighbouring tokens into coherent spans, which reduces the noisy, fragmented masking that would otherwise break readability. Combined with the model’s context-aware classification, this helps the system decide whether a phone number belongs to a private individual or a public business, whether a date identifies a specific person, and how to treat URLs or account numbers embedded in logs. The resulting spans can then be handed off to a policy layer that implements the level of redaction your workflow or regulatory environment requires. The approach is described in the official documentation and explored further in practitioner-oriented breakdowns such as the OpenAI GPT 5.5 guide on what’s new and why it matters, which is useful background when you’re designing end-to-end architectures.
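To make the idea of constrained decoding concrete, here is a small, self-contained sketch. It assumes a BIO tagging scheme (`B-x` opens a span, `I-x` continues it, `O` is non-private) and per-token log-scores for each tag. Both are illustrative assumptions, since the tag scheme and transition rules the Privacy Filter actually uses are not spelled out here.

```python
import math


def allowed(prev: str, cur: str) -> bool:
    """BIO constraint: I-x may only follow B-x or I-x of the same type."""
    if cur.startswith("I-"):
        return prev in ("B-" + cur[2:], "I-" + cur[2:])
    return True


def constrained_viterbi(scores: list[dict[str, float]]) -> list[str]:
    """scores[t][tag] is a log-score; returns the best path that obeys `allowed`."""
    if not scores:
        return []
    tags = list(scores[0])
    # A sequence cannot open with an I- tag.
    best = {t: (-math.inf if t.startswith("I-") else scores[0][t]) for t in tags}
    back = []
    for step in scores[1:]:
        new_best, pointers = {}, {}
        for cur in tags:
            prev_score, prev_tag = max((best[p], p) for p in tags if allowed(p, cur))
            new_best[cur] = prev_score + step[cur]
            pointers[cur] = prev_tag
        best = new_best
        back.append(pointers)
    path = [max(best, key=best.get)]
    for pointers in reversed(back):
        path.append(pointers[path[-1]])
    return path[::-1]


def tags_to_spans(tags: list[str]) -> list[tuple[int, int, str]]:
    """Collapse a BIO path into (start_token, end_token_exclusive, label) spans."""
    spans, start = [], None
    for i, tag in enumerate(tags + ["O"]):
        if start is not None and not tag.startswith("I-"):
            spans.append((start, i, tags[start][2:]))
            start = None
        if tag.startswith("B-"):
            start = i
    return spans


# Toy scores for the tokens "call", "555", "0199", "now". Picking the top tag
# per token would give O, I-phone, I-phone, O, which is not a valid BIO sequence.
toy_scores = [
    {"O": -0.1, "B-phone": -3.0, "I-phone": -3.0},
    {"O": -2.0, "B-phone": -1.0, "I-phone": -0.5},
    {"O": -2.0, "B-phone": -2.5, "I-phone": -0.2},
    {"O": -0.1, "B-phone": -3.0, "I-phone": -3.0},
]
toy_path = constrained_viterbi(toy_scores)  # O, B-phone, I-phone, O
toy_spans = tags_to_spans(toy_path)         # [(1, 3, "phone")]
```

The point of the constraint is visible in the toy data: a per-token argmax would start the phone number with a continuation tag, whereas the decoder picks the best sequence that is structurally valid and so yields one clean span.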

Typical Ways People Use The OpenAI Privacy Filter

Most teams use the Privacy Filter as a preprocessing step that runs before any external AI or analytics service receives text. In a common pattern, incoming data such as chat transcripts, customer support tickets, or uploaded documents flows first through the filter running on a local server or even a developer’s laptop. The model identifies and masks PII, then forwards only the scrubbed version to cloud-based large language models like ChatGPT, search indexes, or logging platforms. This significantly reduces the risk created when users paste sensitive content into AI tools, because direct identifiers are removed at the source rather than being managed downstream through policy alone. This pattern mirrors how modern AI customer support transformations are built to protect end‑user data by default.
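One way to picture this preprocessing pattern is as a gate wrapped around any outbound call. The sketch below is an assumption-laden illustration: `toy_detect` is a regex stand-in used only so the example runs on its own (it is not the Privacy Filter), and `make_gate`, `scrub`, and the `send` callable are hypothetical names.

```python
import re
from typing import Callable

Span = tuple[int, int, str]  # (start, end, label) in character offsets


def toy_detect(text: str) -> list[Span]:
    """Stand-in detector for the demo only: flags email-like strings."""
    pattern = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")
    return [(m.start(), m.end(), "email") for m in pattern.finditer(text)]


def scrub(text: str, spans: list[Span]) -> str:
    """Replace each detected span with a bracketed label placeholder."""
    pieces, cursor = [], 0
    for start, end, label in sorted(spans):
        if start < cursor:
            continue  # skip spans that overlap one already replaced
        pieces.append(text[cursor:start])
        pieces.append(f"[{label.upper()}]")
        cursor = end
    pieces.append(text[cursor:])
    return "".join(pieces)


def make_gate(detect: Callable[[str], list[Span]], send: Callable[[str], object]):
    """Wrap `send` so it only ever receives scrubbed text."""
    def gated(text: str):
        spans = detect(text)  # if detection raises, nothing is sent (fail closed)
        return send(scrub(text, spans))
    return gated


# Usage: `outbox.append` stands in for a call to an external LLM or logger.
outbox: list[str] = []
gate = make_gate(toy_detect, outbox.append)
gate("Reach me at jo@example.com today")
```

Letting a detector error propagate instead of falling back to the raw text is a deliberate choice here: if the filter is unavailable, the request fails and nothing unredacted leaves the machine.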

Another frequent use is in data preparation for model training or fine-tuning. Organisations that want to leverage existing text corpora but must comply with privacy obligations can apply the filter in batch mode to produce a redacted dataset that is safer to store, share, and reuse across teams. Because the model is open-weight and Apache-licensed, engineering groups can integrate it into custom ETL pipelines or adapt it for niche domains such as healthcare, finance, or education. Guidance on deploying the filter at scale and integrating with Python or JavaScript applications is available through the Hugging Face model page and the accompanying GitHub repository. Both resources provide practical examples of streaming large inputs and configuring masking policies, much like the hands‑on resources that accompany machine learning and predictive models used in production analytics pipelines.
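For batch use, a streaming loop over JSON Lines files is often enough. The following is a rough sketch under stated assumptions: records are one JSON object per line with the text in a `text` field, and `redact` is a placeholder for whatever function wraps your detector and masking policy. None of these names come from the official repository.

```python
import json
from typing import Callable, Iterable, Iterator


def redact_records(
    lines: Iterable[str],
    redact: Callable[[str], str],
    field: str = "text",
) -> Iterator[str]:
    """Yield redacted JSON lines one at a time, so large corpora never sit in memory."""
    for line_no, line in enumerate(lines, start=1):
        if not line.strip():
            continue  # tolerate blank lines between records
        record = json.loads(line)
        if field not in record:
            raise KeyError(f"line {line_no}: missing field {field!r}")
        record[field] = redact(record[field])
        yield json.dumps(record, ensure_ascii=False)


def redact_file(src_path: str, dst_path: str, redact: Callable[[str], str]) -> int:
    """Stream src_path to dst_path and return the number of records written."""
    written = 0
    with open(src_path, encoding="utf-8") as src, open(dst_path, "w", encoding="utf-8") as dst:
        for out_line in redact_records(src, redact):
            dst.write(out_line + "\n")
            written += 1
    return written
```

Raising on a missing field, instead of passing the record through unchanged, avoids quietly writing unredacted rows into a dataset that is meant to be safe to share.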

Strengths, Limits, And How To Compare Alternatives

The Privacy Filter stands out because it combines contextual understanding with local execution. Unlike rule-driven tools that can miss cleverly formatted identifiers or over-mask generic phrases, this model interprets how information is used and whether it is truly identifying. For Australian organisations, this context sensitivity is especially important when dealing with mixed-format records that contain free text, partial addresses, and historical dates that might reveal someone’s identity when combined. However, OpenAI is explicit that the filter is a technical control rather than a full compliance framework. It must be paired with broader governance measures such as access controls, retention rules, and incident response plans aligned with the Privacy Act 1988 and the Notifiable Data Breaches scheme. This is where partnering with providers like LYFE AI, which focuses on secure, innovative AI solutions, can help connect model-level controls with organisational policy.

When evaluating alternatives, it helps to compare both detection quality and deployment model. Local anonymisation tools and open-source PII maskers can be attractive for simple workloads but may rely heavily on regular expressions and offer limited multi-language support. Enterprise data privacy platforms provide more governance features but are often proprietary black boxes. In contrast, OpenAI’s filter offers open weights, transparent documentation, and a modern language model backbone, which allows teams to inspect its behaviour and adapt it to their needs.

Industry commentators have noted that this blend of open access, efficiency, and privacy-centric design marks a shift in how AI vendors are supporting safer data use, especially as boards and regulators pay closer attention to AI-driven cyber risks. An overview of this broader risk landscape and the role of technical mitigations can be found in recent analyses such as the Harvard Business Review’s coverage of AI reshaping cyber risk, alongside more technical comparisons like GPT 5.5 vs Claude Opus 4.7 for real work and modality-specific evaluations such as GPT Image 2 vs 1.5 and 1 on what really changed. Together, these resources show how privacy tooling must keep pace with rapid capability shifts in AI assistants, including secure Australian AI assistants configured for individual profiles and production-ready deployments handling everyday tasks.

[1] arxiv.org

[2] elvex.com

[3] codingscape.com

[4] openai.com

Frequently Asked Questions

What is the OpenAI Privacy Filter and how does it work?

The OpenAI Privacy Filter is an open-weight AI model that detects and removes personally identifiable information (PII) from text before it’s logged, shared, or sent to another system. It uses context-aware token classification rather than simple pattern matching, so it can understand whether a name, number, or string is actually sensitive in context. This makes it suitable for unstructured inputs like chats, emails, tickets, or documents. It can be deployed inside your own infrastructure so raw sensitive data doesn’t have to leave your environment.

What types of personal data does the OpenAI Privacy Filter remove?

The OpenAI Privacy Filter is designed to detect at least eight categories of PII, including names, physical addresses, email addresses, phone numbers, URLs, dates, account or ID numbers, and secrets like passwords or API keys. Once detected, you can configure different policies for each type, such as fully masking, partially redacting, or pseudonymising them. This lets you safely analyse behaviour and content without exposing real identities. It’s especially useful for sectors like healthcare, finance, and customer support where PII appears in free-text fields.

How do companies actually use the OpenAI Privacy Filter in practice?

Companies typically place the Privacy Filter as a pre-processing layer in front of their AI tools, chatbots, or analytics pipelines. Incoming text (for example, from support tickets, forms, or chat prompts) is scanned and redacted locally before being sent to large language models or external APIs. They then use the filtered outputs for tasks like summarisation, classification, or assistant responses. LYFE AI, for example, can integrate the filter into secure Australian AI assistants so sensitive prompts and responses stay onshore and compliant.

Is the OpenAI Privacy Filter better than using regular expressions for PII detection?

In most real-world use cases, the Privacy Filter is more accurate and flexible than regex-only approaches because it uses context-aware AI instead of fixed patterns. Regular expressions struggle with unstructured data, edge cases, and international formats, and they often over-mask or miss important items. The Privacy Filter classifies each token in context, deciding if something that looks like a name, date, or number is actually sensitive in that sentence. You can still combine it with regex rules, but the model significantly reduces manual rule maintenance.
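Combining the two approaches usually comes down to taking the union of their spans. Here is a minimal sketch, assuming both sources report `(start, end, label)` character offsets. The `RULES` table holds a single illustrative pattern for Australian-style mobile numbers, and `merge_spans` is a hypothetical helper, not part of any released tooling.

```python
import re

Span = tuple[int, int, str]  # (start, end, label) in character offsets

# Illustrative rule only: ten digits starting with 04, optionally space-separated.
RULES = {"phone": re.compile(r"\b04\d{2} ?\d{3} ?\d{3}\b")}


def regex_spans(text: str) -> list[Span]:
    """Collect spans from every rule in RULES."""
    return [
        (m.start(), m.end(), label)
        for label, pattern in RULES.items()
        for m in pattern.finditer(text)
    ]


def merge_spans(*sources: list[Span]) -> list[Span]:
    """Union spans from several detectors, fusing any that overlap.

    When spans overlap, the fused span keeps the label of the one that starts first.
    """
    merged: list[Span] = []
    for start, end, label in sorted(s for source in sources for s in source):
        if merged and start < merged[-1][1]:
            prev_start, prev_end, prev_label = merged[-1]
            merged[-1] = (prev_start, max(prev_end, end), prev_label)
        else:
            merged.append((start, end, label))
    return merged
```

Taking the union errs on the side of over-masking: if either detector flags a region, it is redacted. Teams that care more about readability than recall might prefer to keep regex rules only for rigid formats the model is known to miss.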

Can I run the OpenAI Privacy Filter on-premises or in my own cloud?

Yes. The OpenAI Privacy Filter is released under the Apache 2.0 licence and can be deployed within your own infrastructure, including on-prem servers, private cloud, or even laptops and browsers. That means unredacted content can stay entirely inside your controlled environment while only redacted text is passed to other systems. LYFE AI can help Australian organisations design and host these privacy-preserving AI pipelines locally to meet data residency and compliance requirements.

How do I integrate the OpenAI Privacy Filter into my existing AI workflows?

You typically integrate the Privacy Filter as a middleware step before any calls to LLMs, search, logging, or analytics tools. Text from users, documents, or logs is sent to the filter first, which returns a redacted version plus labels indicating what was removed. That filtered text is what you then feed into downstream models or storage systems. If you need help architecting this, LYFE AI offers consulting and implementation services to embed the filter into chatbots, internal tools, and document pipelines.

Does the OpenAI Privacy Filter affect the accuracy of AI models that use the redacted text?

There is some trade-off, but the filter is designed to preserve as much useful context as possible while only masking sensitive spans. Because it can pseudonymise entities instead of just blanking them out, downstream models can still understand relationships and behaviour patterns (e.g., User A vs User B) without seeing real identities. For many tasks like summarisation, classification, or intent detection, performance remains strong while privacy risk is greatly reduced. LYFE AI can help tune redaction policies so your specific use case balances privacy and model performance.

Is the OpenAI Privacy Filter suitable for use under Australian privacy laws like the Privacy Act and data residency requirements?

It can be. The filter is designed to support privacy-by-design approaches that align with regulations like the Australian Privacy Act and various industry codes, but it is a technical control rather than a complete compliance framework. Because the model can run inside Australian data centres or on-prem, you can keep raw PII onshore and avoid sending unredacted data overseas. Pairing the Privacy Filter with LYFE AI’s secure Australian AI assistants further helps meet data residency, consent, and minimisation obligations. You should still obtain legal advice, but technically it provides strong building blocks for compliant AI workflows.

Can the OpenAI Privacy Filter handle long documents and email chains?

The Privacy Filter is built to work with modern models that support long context windows, up to around 128,000 tokens. This means you can scan full contracts, large code files, or long multi-threaded email chains in a single pass instead of chunking them into smaller pieces. That reduces the risk of missing context-dependent PII or inconsistencies between chunks. It’s particularly useful for legal, compliance, and enterprise document-processing workflows that LYFE AI can help automate securely.

What’s the difference between the OpenAI Privacy Filter and just using OpenAI’s own APIs with data controls?

Using only OpenAI’s APIs with data controls still means your raw input text is sent to a third-party service, even if it’s not used for training. The Privacy Filter lets you remove or pseudonymise PII locally before any external call is made, significantly reducing exposure risk. It also works with other vendors and open-source models, acting as a vendor-neutral privacy layer. LYFE AI can design architectures where the Privacy Filter protects all your AI tools, not just a single API provider.