Table of Contents

- OpenAI GPT 5.5: What’s New And Why It Matters

- Key Capability Upgrades: Coding, Tool Use, Research, Reasoning And Cost

- GPT 5.5 As A Step Toward Agentic AI And The “Super App” Vision

- Efficiency, Token Usage And Cost-Performance In GPT 5.5

- Strategic Considerations For Australian Businesses Evaluating GPT 5.5

- Conclusion: How To Decide If GPT 5.5 Is Right For You

OpenAI GPT 5.5: What’s New And Why It Matters

OpenAI’s newly released GPT‑5.5 is the latest step in turning large language models into practical, agentic work partners for day‑to‑day tasks – especially coding, research, and other knowledge work – rather than just smart chatbots.[1][2][3] Built on the GPT‑5 family, which includes models like GPT‑5.2, it focuses on three areas: more reliable reasoning and execution on complex, multi‑step tasks; deeper, more flexible integration with tools, search, and code execution; and stronger, more token‑efficient cost‑performance for serious workloads like coding, research, and content creation.[4][5][6] For Australian businesses, developers, and tech-savvy teams, this means AI that can not only help think through a problem but also carry out more of the process – from reading documents to suggesting fixes in code – while keeping latency and costs in check. GPT 5.5 is designed to power secure Australian AI assistants and agents that feel more like a capable colleague than a simple autocomplete engine.

According to coverage of OpenAI’s latest model and the Models documentation for the OpenAI API, GPT 5.5 brings major gains in coding and computer use, deeper research and reasoning abilities, and token efficiency improvements that let it achieve better results with fewer tokens than GPT-5.4.

[1] Introducing GPT‑5 for developers | OpenAI [2] openai.com [3] clarifai.com

Key Capability Upgrades: Coding, Tool Use, Research, Reasoning And Cost

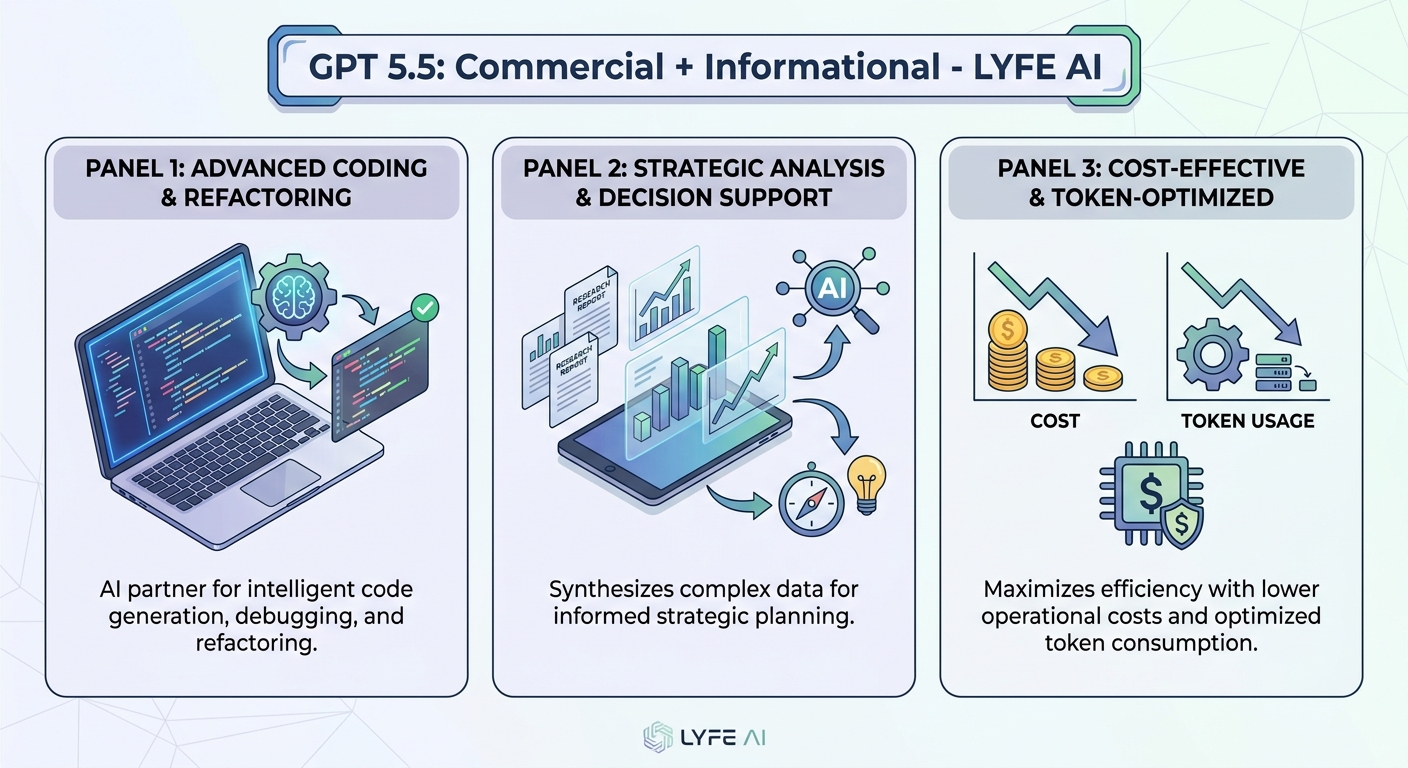

GPT 5.5’s most important upgrades cluster around three themes: coding and computer use, research and reasoning, and efficiency. For coding and computer use, the model is significantly better at generating correct code, refactoring existing codebases, and operating as a kind of “agent” that can plan and execute multi-step actions within software tools. In practice, this might mean letting an AI assistant navigate a project management interface, update issues, and push code changes with less back-and-forth guidance from a human developer. AI-powered customer support is already being transformed in a similar way in modern service environments.

In research and reasoning, GPT 5.5 is described as much stronger at decomposing messy, real-world tasks into smaller parts, then producing coherent, long-form analysis. That includes market research, legal and policy analysis, and strategic planning tasks where information is fragmented and ambiguous. The model can better maintain a line of reasoning over many steps, especially when paired with tools such as code interpreters and web search, which help anchor its outputs in data and executable code rather than pure text prediction, a pattern echoed in enterprise deployments of GPT‑5.2 in Microsoft Foundry.

Efficiency is the third pillar. GPT 5.5 is characterised as “smarter for fewer tokens” compared to GPT-5.4, meaning it often needs shorter prompts and can respond with more concise, on-target answers. For API users and platform builders, this implies improved cost-performance: even if the per-token price is higher, the total tokens consumed per useful outcome can be lower, which reduces the effective cost per task and raises throughput because the system processes fewer tokens for the same job. Because GPT 5.5 is aimed at “intelligence-bottlenecked” tasks – where human time and attention are the constraints – the cost-performance story is also about how much useful work one API call can achieve. That matters most for coding, research, and knowledge-intensive services, the workloads where overviews of the GPT‑5.5 model emphasise efficiency gains.

GPT 5.5 As A Step Toward Agentic AI And The “Super App” Vision

Beyond raw capabilities, GPT 5.5 is framed as a foundational piece in OpenAI’s move toward what some observers call an AI “super app” – a unified, multi-purpose environment where chat, coding, browsing, document work, and tool use blend into one agentic experience. Instead of treating each task in isolation, the idea is to have AI assistants that can run end-to-end workflows: drafting reports, pulling data from online sources, updating spreadsheets, coordinating emails, and even interacting with other software tools, all while keeping context across steps, similar in spirit to how specialised AI services are woven into everyday business operations.

GPT 5.5’s advances in handling complex, multi-step tasks are especially important here. The model is built to better understand “messy” user requests, where the goal might not be fully specified at the start. It can plan sub-tasks, call external tools when needed, check its own work, and adapt to feedback mid-stream. In the context of a “super app,” that means a user could simply describe a high-level outcome – such as preparing a board pack for an Australian startup’s quarterly review – and the AI could coordinate data gathering, slide creation, and written analysis with less micromanagement.
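The plan, act, check, and adapt cycle described above can be sketched in a few lines of Python. This is only an illustration of the control flow, not how GPT 5.5 or any OpenAI product is implemented: the `plan` function, the `TOOLS` table, the `check` function, and the step names are all hypothetical stand-ins, stubbed so the example runs without any external service.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class AgentRun:
    """Record of what the agent did, kept so context survives across steps."""
    goal: str
    completed: List[str] = field(default_factory=list)
    notes: Dict[str, str] = field(default_factory=dict)


def plan(goal: str) -> List[str]:
    # Hypothetical planner: a real system would ask the model for this list.
    return ["gather_data", "draft_slides", "write_analysis"]


# Hypothetical tools, stubbed so the sketch needs no network access.
TOOLS: Dict[str, Callable[[AgentRun], str]] = {
    "gather_data": lambda run: "quarterly metrics collected",
    "draft_slides": lambda run: "8 slides drafted",
    "write_analysis": lambda run: "summary written from " + run.notes["gather_data"],
}


def check(step: str, result: str) -> bool:
    # Placeholder self-check: a real one might validate figures or re-read output.
    return bool(result.strip())


def run_agent(goal: str, max_attempts: int = 2) -> AgentRun:
    run = AgentRun(goal=goal)
    for step in plan(goal):
        for _ in range(max_attempts):
            result = TOOLS[step](run)
            if check(step, result):
                run.notes[step] = result
                run.completed.append(step)
                break
        else:
            # Every attempt failed: stop and hand the step back to a human.
            run.notes[step] = "needs human review"
            break
    return run


if __name__ == "__main__":
    outcome = run_agent("Prepare the quarterly board pack")
    print(outcome.completed)
```

The point of the structure is that each step can read the notes left by earlier steps, and a failed check stops the run for human review instead of letting later steps build on bad output.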

This agentic orientation also shapes how GPT 5.5 will likely be used in enterprise environments. Rather than being limited to answering questions, it can serve as the engine behind AI copilots inside CRM platforms, document management systems, and research dashboards. In those settings, its improved tool use and reasoning help it act more like a junior analyst or developer, capable of taking on real pieces of work. As OpenAI and partners push toward richer, integrated applications, GPT 5.5 stands out not just as a smarter chatbot, but as a step toward AI systems that operate across the full digital workspace on behalf of users, a direction consistent with the roadmap set out in “Introducing GPT‑5”.

Efficiency, Token Usage And Cost-Performance In GPT 5.5

One of the more quietly transformative aspects of GPT 5.5 is its token efficiency. Tokens are the basic units of text (or other data) that models process, and API pricing is usually based on how many tokens you send in and receive back. GPT 5.5 is designed to deliver comparable or better performance than GPT-5.4 on many benchmarks while often achieving those results with fewer tokens for similar tasks.[7][8] In practice, that means it needs shorter prompts from users and generates more concise, accurate completions, which is especially appealing when deploying machine learning and predictive models at scale across an organisation.

For developers and businesses, this has two main implications. First, overall costs for a given workflow can drop even if per-token pricing is higher, because the model simply consumes fewer tokens to get to the desired outcome. Second, latency and throughput can improve, since the system spends less time processing long prompts and sprawling outputs. In high-traffic or enterprise settings, shaving off tokens at scale can translate directly into a smoother, more responsive user experience, especially for complex agentic workflows that might otherwise require many back-and-forth calls.
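The first implication is simple arithmetic, and a short Python sketch makes it concrete. The token counts and prices below are invented purely for illustration; they are not published OpenAI prices or measured GPT 5.5 figures, and the function names are hypothetical.

```python
def cost_per_task(input_tokens: int, output_tokens: int,
                  input_price_per_million: float,
                  output_price_per_million: float) -> float:
    """Cost of one task, given token counts and per-million-token prices."""
    return (input_tokens * input_price_per_million
            + output_tokens * output_price_per_million) / 1_000_000


def break_even_token_ratio(price_ratio: float) -> float:
    """Fraction of the old token count at which a pricier model costs the same.

    Assumes input and output prices rise by the same ratio.
    """
    return 1.0 / price_ratio


# Illustrative numbers only: the newer model is 25% pricier per token
# but is assumed to use fewer tokens for the same task.
older = cost_per_task(6_000, 2_000,
                      input_price_per_million=2.0, output_price_per_million=8.0)
newer = cost_per_task(4_000, 1_000,
                      input_price_per_million=2.5, output_price_per_million=10.0)

print(f"older: ${older:.4f} per task, newer: ${newer:.4f} per task")
print(f"break-even token ratio at 1.25x price: {break_even_token_ratio(1.25):.2f}")
```

Under these assumed numbers the pricier model is still cheaper per task. More generally, a model that costs 1.25 times as much per token breaks even once it uses 80% or less of the tokens, so measuring real token counts on your own workloads is what settles the question.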

[1] Introducing GPT-5.5 | OpenAI [2] youtube.com [3] nvidia.com

Strategic Considerations For Australian Businesses Evaluating GPT 5.5

For Australian organisations, deciding whether to adopt GPT 5.5 will come down to matching its strengths to specific, high-value problems. The model is particularly well suited to scenarios where tasks are complex, multi-step, and currently constrained by human expert time: codebase maintenance, in-depth research, policy and legal analysis, or multi-document summarisation. In those areas, its stronger reasoning and agentic capabilities can unlock meaningful productivity gains, especially when integrated into existing tools like IDEs, document editors, and internal dashboards, or into domain-specific AI assistants tailored to local workflows.

At the same time, buyers need to consider infrastructure, governance, and compliance. GPT 5.5 is a cloud-hosted model, so critical workflows will depend on stable internet access and robust API integration. Safety and guardrails are an explicit focus in the system’s design, with OpenAI emphasising stricter classifiers for cyber risk and broad evaluation across safety frameworks. Even so, organisations must still build their own usage policies, audit processes, and fallback plans for model errors or outages, particularly in regulated sectors. Comparing these considerations with other roadmap options, such as those outlined in “ChatGpt 5.5 Vs 6 – Expected Features & Roadmap 2026”, can also shape long-term planning.
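One common shape for a fallback plan is a wrapper that retries the primary model a few times and then degrades gracefully. The sketch below is a generic pattern under stated assumptions, not part of any OpenAI SDK: `primary` and `fallback` are hypothetical callables that you would supply (for example, an API call and a "queue for human review" handler), and the retry counts and delays are arbitrary.

```python
import time
from typing import Callable


def call_with_fallback(primary: Callable[[str], str],
                       fallback: Callable[[str], str],
                       prompt: str,
                       retries: int = 2,
                       base_delay: float = 0.5,
                       sleep: Callable[[float], None] = time.sleep) -> str:
    """Try the primary model with exponential backoff, then use the fallback."""
    for attempt in range(retries + 1):
        try:
            return primary(prompt)
        except Exception as exc:  # In production, catch narrower error types.
            print(f"primary attempt {attempt + 1} failed: {exc}")
            if attempt < retries:
                # Wait 0.5s, 1s, 2s, ... before the next attempt.
                sleep(base_delay * 2 ** attempt)
    return fallback(prompt)


if __name__ == "__main__":
    def unavailable(prompt: str) -> str:
        # Stand-in for a model call during an outage.
        raise ConnectionError("service unavailable")

    def queue_for_human(prompt: str) -> str:
        return "queued for human review"

    print(call_with_fallback(unavailable, queue_for_human, "Summarise this contract",
                             base_delay=0.01))
```

Passing `sleep` as a parameter keeps the wrapper easy to test, and logging each failure gives audit processes something to review after an incident.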

Cost is another key dimension. While OpenAI positions GPT 5.5 as token-efficient compared with GPT-5.4, pricing for API access will still reflect its status as a frontier model, so teams should start by identifying “intelligence bottlenecks” where even modest speed-ups create outsized value. Early experimentation – for example, piloting GPT 5.5 on a narrow set of coding tasks or research workflows – can help refine ROI models before wider rollout. For many Australian businesses, the strategic question is less “Can we afford GPT 5.5?” and more “Where would its agentic capabilities most transform our operations, and how do we adopt it safely and deliberately?”, ideally in partnership with specialist providers experienced in secure, innovative AI solutions.
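A first-pass ROI model for such a pilot can be very small. The sketch below assumes the value of a pilot is staff time saved and the cost is API spend plus a fixed monthly overhead; the function name, its parameters, and every number are hypothetical placeholders to be replaced with figures from your own pilot.

```python
def pilot_roi(tasks_per_month: int,
              minutes_saved_per_task: float,
              hourly_rate: float,
              api_cost_per_task: float,
              fixed_monthly_cost: float = 0.0) -> dict:
    """Rough monthly ROI: value of time saved versus API and overhead costs."""
    value = tasks_per_month * minutes_saved_per_task / 60 * hourly_rate
    cost = tasks_per_month * api_cost_per_task + fixed_monthly_cost
    return {
        "value": value,
        "cost": cost,
        "net": value - cost,
        # ROI expressed as net gain per dollar spent; None if nothing was spent.
        "roi": (value - cost) / cost if cost else None,
    }


if __name__ == "__main__":
    # Illustrative inputs only, in AUD: 400 tasks a month, 15 minutes saved each.
    result = pilot_roi(tasks_per_month=400, minutes_saved_per_task=15,
                       hourly_rate=90.0, api_cost_per_task=0.05,
                       fixed_monthly_cost=500.0)
    for key, amount in result.items():
        print(f"{key}: {amount:.2f}")
```

With inputs like these, the result is dominated by minutes saved per task rather than by API price, which is why the bottleneck-first framing above is a sensible place to start.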

[7] Introducing GPT-5.5 | OpenAI [8] OpenAI releases GPT-5.5 with improved coding and research capabilities

Conclusion: How To Decide If GPT 5.5 Is Right For You

GPT 5.5 represents a clear evolution in OpenAI’s model line, shifting the focus from simple Q&A toward deep-reasoning, tool-using agents geared for real-world work. Its upgrades in coding support, research capability, and token efficiency make it a strong candidate for complex, high-value workflows where human expertise is scarce and the work is time-consuming. For Australian teams, the decision to adopt should start with a focused assessment: which current processes are constrained by cognitive load rather than simple automation limits, and where could a more autonomous AI assistant provide the biggest upside without compromising safety or compliance? Engaging with local AI instructors and experts can make that assessment far more grounded.

If your organisation is already experimenting with GPT-5 or GPT-5.4, GPT 5.5 offers a natural upgrade path toward richer, agentic applications. With thoughtful pilots, clear governance, and careful cost modelling, it can move from a promising technology to a core part of how work gets done. Adoption tends to go more smoothly when the model is embedded into structured AI training programs and tailored assistant profiles that carry teams through to practical implementation and rollout.

Frequently Asked Questions

What is OpenAI GPT 5.5 and how is it different from earlier GPT models?

GPT 5.5 is OpenAI’s latest large language model in the GPT‑5 family, designed to act more like a capable digital coworker than a simple chatbot. Compared to earlier models like GPT‑5.2 or GPT‑5.4, it offers stronger reasoning on multi‑step tasks, better integration with tools and code execution, and improved token efficiency, so you can often get more done for the same or lower cost.

What’s new in GPT 5.5 compared to GPT 5.4?

GPT 5.5 introduces major upgrades in coding accuracy, multi‑step task execution, and research‑grade reasoning while using fewer tokens to reach the same or better quality. It’s also more “agentic,” meaning it can plan and carry out sequences of actions inside tools, search, and code environments with less human micromanagement than GPT‑5.4.

How good is GPT 5.5 for coding and software development?

GPT 5.5 is significantly stronger at generating correct code, refactoring existing codebases, and understanding larger, more complex repositories. It can also operate more like an autonomous coding assistant, planning and executing multi‑step actions such as updating tickets, editing files, and suggesting fixes, which reduces repetitive work for development teams.

What does it mean that GPT 5.5 is more ‘agentic’?

More ‘agentic’ means GPT 5.5 can go beyond single prompts and responses to plan, sequence, and execute multiple steps inside your tools. For example, it can read documents, call APIs, interact with project management software, and loop through actions until a defined goal is met, acting more like a junior colleague following a brief.

How does GPT 5.5 handle research and complex reasoning tasks?

GPT 5.5 is tuned for deeper research workflows: it can read longer documents, synthesise findings, compare sources, and keep track of multi‑step reasoning chains. This makes it more reliable for tasks like drafting reports, analysing policies, or exploring technical questions where earlier models might lose context or make more errors.

Is GPT 5.5 more cost‑effective than previous models?

Often, yes. GPT 5.5 is designed to be more token‑efficient, meaning it often needs fewer tokens to achieve equal or higher quality outputs than GPT‑5.4. For businesses running ongoing workloads like coding assistance, research, and content creation, this can translate into lower API spend for the same or better performance, even if the per‑token price is higher.

How can Australian businesses use GPT 5.5 in day‑to‑day operations?

Australian businesses can use GPT 5.5 to power AI assistants for coding, customer support, research, documentation, and internal knowledge search. With its improved tool use, it can integrate into CRMs, project management systems, and internal apps to automate updates, draft responses, and surface relevant information for staff.

What is LYFE AI and how do you use GPT 5.5 for clients?

LYFE AI is an Australian AI consultancy that builds secure, customised AI assistants and agents for local businesses. We integrate models like GPT 5.5 into your existing systems and data, so the assistant can safely handle company‑specific tasks such as support triage, coding help, documentation, and operations workflows.

Can GPT 5.5 be used to improve customer support in my business?

GPT 5.5 can power AI support agents that understand your knowledge base, answer complex customer questions, and update tickets or CRM records automatically. With better reasoning and tool use, it can handle more of the end‑to‑end process, escalating only truly complex or sensitive issues to human staff.

How do I get started using GPT 5.5 with LYFE AI?

To get started, LYFE AI typically runs a discovery session to understand your systems, data, and key workflows, then designs an AI assistant or agent powered by GPT 5.5 around those needs. We handle integration with the OpenAI API, security and data controls, and ongoing tuning so the assistant stays accurate and useful in your environment.